25 Million Alerts. One Year of Real SOC Data.

What Intezer's 2026 AI SOC Report Reveals About Alert Fatigue, Triage Gaps, and the Future of Security Operations

Welcome to The Cybersecurity Pulse (TCP)! I’m Darwin Salazar, Head of Growth at Monad and former detection engineer in big tech. Each week, I bring you the latest security innovation and industry news.

Intezer recently dropped their 2026 AI SOC Report, a full analysis of 25 million security alerts triaged across live enterprise SOCs in 2025. I read all 35 pages. Here’s what stood out, what it means, and what I think most security teams can take from it.

The math inside most SOCs is broken, and it has been for years. Too many alerts, not enough analysts, and the people in those seats are legit running on fumes. Burnt-out analysts and overworked IR teams don’t just slow down. They miss things. So teams triage aggressively, auto-close the low-severity stuff, and hope nothing slips through.

This has been true for decades. Detection engineers can tune rules and build enrichment playbooks all day, but the volume problem is structural. Industry surveys consistently show that over 60% of alerts are never reviewed. Not by in-house SOC teams. Not by MDRs. They get deprioritized or buried.

Intezer just put a number on what “slips through” actually looks like and the cost of the trade-off that most SOCs make.

The scale of the dataset alone sets this apart:

25 million alerts. 10 million monitored endpoints and identities.

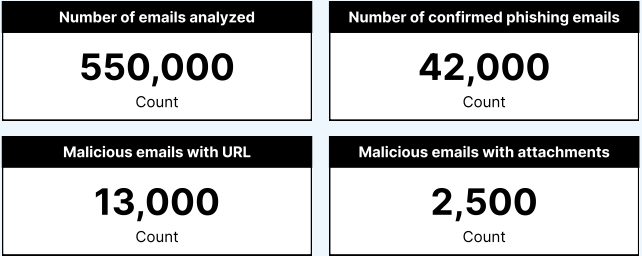

180 million files analyzed. 7 million IP addresses investigated. 550,000 emails analyzed.

Over 82,000 endpoint forensic investigations, including live memory scans.

This isn’t a survey or a simulation. It’s operational data from production SOC environments at organizations like NVIDIA, MGM Resorts, and Equifax.

I spent years building detection rules and investigation playbooks, and I work with detection engineers and SOC teams daily at Monad. I know what’s supposed to happen after a rule fires. I also know what actually happens. This report quantifies that gap.

Why I’m Writing About a Vendor Report

Let me be direct. AI SOC is a crowded, noisy market. There are 25+ vendors selling some version of it right now. Most are glorified LLM wrappers that summarize alert metadata and call it “investigation.” If you’re skeptical of the category, you should be.

I’ve watched Intezer’s platform evolve over the years. Where most AI SOC tools are pattern-matching on alert metadata and hoping for the best LLM-generated verdict, Intezer is doing actual forensic analysis. Deterministic, not probabilistic. Every verdict is fully auditable. And the analysis goes to forensic depth: live memory scans, genetic code analysis, actual endpoint investigation.

The framing matters too. Intezer isn’t selling “replace your analysts.” They’re selling “cover the 60% that was never getting covered.” That’s a fundamentally different value proposition.

But the report itself earned this writeup on its own merits. It’s genuinely educational in a way that leads me to recommend it to anyone from Jr.-level operators to CISOs. Take the section on plaintext password alerts (p.20). They don’t just flag “we found unencrypted credentials on the wire” and move on. They trace it to directory services still running unencrypted LDAP, transmitting credentials in cleartext because nobody configured LDAPS or StartTLS. That level of precision tells you the people who wrote this have actually done the work. It’s a great refresher or learning track for pretty much any level.
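To make the LDAP point concrete, here is a minimal sketch of how a config audit might flag cleartext binds. The `LdapTarget` type and its `start_tls` field are hypothetical names made up for illustration, not anything from the report. The only facts it leans on are that `ldap://` is unencrypted unless the session is upgraded with StartTLS, and that `ldaps://` runs over TLS from the start.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class LdapTarget:
    """Hypothetical record describing how a client binds to a directory."""
    uri: str          # e.g. "ldap://dc01.example.internal:389"
    start_tls: bool   # True if the client upgrades the session with StartTLS


def sends_cleartext_credentials(target: LdapTarget) -> bool:
    """Return True if a simple bind to this target would cross the wire unencrypted."""
    scheme = urlparse(target.uri).scheme.lower()
    if scheme == "ldaps":
        return False  # TLS from the first byte
    if scheme == "ldap":
        return not target.start_tls  # plain LDAP is only protected after StartTLS
    raise ValueError(f"not an LDAP URI: {target.uri!r}")
```

Run over an inventory of bind configurations, anything that returns `True` is a candidate source of the plaintext password alerts described above.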

And it puts numbers behind things practitioners have said for years but could hardly prove. “Most impossible travel alerts are false positives.” “Low-sev alerts are hiding real threats.” “EDRs aren’t as reliable as we think.” Now there are receipts.

The Math Nobody Wanted to See

Of everything in this report, this is the finding I keep coming back to.

Nearly 1% of low-severity and informational alerts turned out to be real threats

That sounds small until you do the math. The average enterprise generates around 450,000 alerts per year. Over 60% never get reviewed. At that scale, 1% of low-severity alerts being real means roughly 54 genuine threats per year that nobody investigates. That’s about one per week (!!)
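The figures above skip one intermediate number: how many of those 450,000 alerts are low-severity and uninvestigated. The sketch below works backward from the stated 54 threats at a 1% rate to see what pool size that implies. This is a back-calculation under the rounded figures quoted here, not the report's own method.

```python
ALERTS_PER_YEAR = 450_000        # average enterprise volume quoted above
REAL_THREAT_RATE = 0.01          # "nearly 1%", rounded up
MISSED_THREATS_PER_YEAR = 54     # figure quoted above

# Size of the low-severity, uninvestigated pool that would yield 54 misses at 1%.
implied_low_sev_pool = MISSED_THREATS_PER_YEAR / REAL_THREAT_RATE
implied_share_of_volume = implied_low_sev_pool / ALERTS_PER_YEAR
missed_per_week = MISSED_THREATS_PER_YEAR / 52

print(f"implied uninvestigated low-severity alerts: {implied_low_sev_pool:,.0f}")
print(f"share of total alert volume: {implied_share_of_volume:.1%}")
print(f"missed threats per week: {missed_per_week:.2f}")
```

Under these assumptions the implied pool is about 5,400 alerts, or roughly 1.2% of annual volume, and the weekly rate lands just above one.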

On endpoints it’s worse. 2% of low-severity endpoint alerts were confirmed incidents. Active, real, and invisible to the teams responsible for catching them.

Intezer ran over 82,000 forensic scans on endpoints throughout the year, including live memory analysis. In 1.6% of those scans, the endpoint was still actively compromised even though the EDR had reported the threat as mitigated. The EDR closed the case. The analyst moved on. The attacker was still there.

And over half of all endpoint alerts were never automatically mitigated by endpoint protection in the first place. Of those non-mitigated alerts, roughly 9% were confirmed malicious.
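Turning those percentages into counts helps. The sketch below treats "over 82,000" and "over half" as floors, so both results are lower bounds under the figures quoted above rather than numbers taken from the report.

```python
FORENSIC_SCANS = 82_000              # "over 82,000", treated as a floor
STILL_COMPROMISED_RATE = 0.016       # EDR said "mitigated", scan found active compromise
NOT_AUTO_MITIGATED_SHARE = 0.50      # "over half", treated as a floor
MALICIOUS_AMONG_UNMITIGATED = 0.09   # "roughly 9%"

# Scans where the endpoint was still compromised after a "mitigated" verdict.
still_compromised_scans = FORENSIC_SCANS * STILL_COMPROMISED_RATE

# Share of ALL endpoint alerts that were both not auto-mitigated and confirmed malicious.
malicious_and_unmitigated_share = NOT_AUTO_MITIGATED_SHARE * MALICIOUS_AMONG_UNMITIGATED

print(f"scans still compromised: at least {still_compromised_scans:,.0f}")
print(f"endpoint alerts malicious and unmitigated: at least {malicious_and_unmitigated_share:.1%}")
```

That works out to at least roughly 1,300 scans where the "mitigated" verdict was wrong, and at least about 4.5% of all endpoint alerts being real and left standing.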

There could be legitimate explanations. Vendor quirks, timing issues, partial remediation. But the operational reality doesn’t change: if you’re trusting your EDR’s “mitigated” status without verification, you have a blind spot.

As someone who built detection rules and playbooks that generate these exact alert types, I know the gap between the intended workflow and what actually happens. The detection fires, the alert enters the queue, and the math takes over. Low-severity stuff gets deprioritized or auto-closed.

That’s the real value of AI-driven triage. Not replacing analysts. Doing forensic-grade analysis on the alerts that were going to be ignored anyway. Finding the threats that may slip through.

Where Attacks Are Actually Evolving

Three patterns jumped out from the threat data. None of them are what most vendors are selling against.

Phishing Doesn’t Need Your Endpoint Anymore

Intezer analyzed 550,000 user-reported phishing emails. Less than 8% were confirmed malicious. But look at how those malicious emails actually break down: less than 6% carried an attachment. Around 30% relied on a link.

The attack surface has moved. Endpoints and inboxes are well-defended, and attackers know it. So they’re keeping the entire kill chain inside the browser, where most security stacks have minimal visibility.

Intezer found platforms like Vercel, CodePen, JSitor, and JSBin hosting live credential-harvesting pages on trusted domains. Phishing sites impersonating crypto wallets and exchanges, asking victims for recovery phrases. Legitimate developer infrastructure being used as disposable phishing hosting. Most threat intel feeds won’t flag these domains because the domains themselves aren’t malicious.
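One practical consequence: for URLs on shared developer platforms, domain reputation tells you nothing about the individual page, so the page itself has to be inspected. A minimal sketch of that routing decision follows. The hostname list is an assumption about each platform's public domain and should be verified before use, and `needs_content_inspection` is a hypothetical helper name.

```python
from urllib.parse import urlparse

# Assumed public hostnames for the platforms named above; verify before relying on them.
SHARED_DEV_HOSTS = ("vercel.app", "codepen.io", "jsitor.com", "jsbin.com")


def needs_content_inspection(url: str) -> bool:
    """True if the URL lives on a shared developer platform, where a clean
    domain reputation says nothing about the specific page."""
    host = (urlparse(url).hostname or "").lower()
    # Match the domain itself or any subdomain, but not lookalikes such as "evilvercel.app".
    return any(host == d or host.endswith("." + d) for d in SHARED_DEV_HOSTS)
```

A URL that returns `True` here would go to a sandboxed page render or content analysis step instead of being cleared on reputation alone.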

Microsoft was the most impersonated brand, representing nearly a third of all phishing URLs. Microsoft and DocuSign together accounted for almost 85% of brand impersonation. Brand impersonation was the top phishing technique at 28.7%, with callback scams right behind at 25.3%.

Attackers are also increasingly gating phishing pages behind Cloudflare’s Turnstile CAPTCHA. The majority of URLs using Google reCAPTCHA were safe. That ratio completely flipped for Cloudflare Turnstile. Attackers are using CAPTCHAs not to stop bots, but to stop security scanners from seeing what’s behind the page.
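A scanner that stops at the CAPTCHA never sees the real page, so "nothing found" should not be read as "clean." The sketch below shows one way a pipeline might notice the gate and route accordingly. The marker strings are the widgets' usual embed script paths and CSS classes as far as I know them, and the routing labels are hypothetical.

```python
from typing import Optional

# Assumed embed markers for each widget's standard integration snippet.
TURNSTILE_MARKERS = ("challenges.cloudflare.com/turnstile", "cf-turnstile")
RECAPTCHA_MARKERS = ("google.com/recaptcha", "g-recaptcha")


def captcha_provider(html: str) -> Optional[str]:
    """Return "turnstile", "recaptcha", or None based on markers in the page source."""
    page = html.lower()
    if any(marker in page for marker in TURNSTILE_MARKERS):
        return "turnstile"
    if any(marker in page for marker in RECAPTCHA_MARKERS):
        return "recaptcha"
    return None


def triage_hint(html: str) -> str:
    """Hypothetical routing rule: gated pages are never auto-cleared, and
    Turnstile-gated pages go to the front of the line."""
    provider = captcha_provider(html)
    if provider == "turnstile":
        return "escalate"
    if provider == "recaptcha":
        return "rescan-interactively"
    return "scan-normally"
```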

Identity Is Drowning in Noise

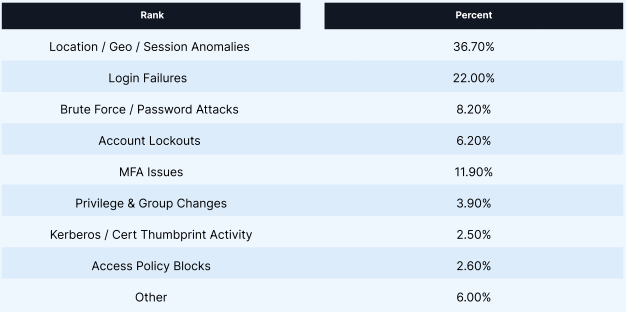

Location and geo anomalies made up over 36% of all identity alerts. Login failures accounted for 22%. But 74.1% of login verdicts were classified as likely benign. The “impossible travel” alerts that flood every SOC queue? Only about 2% were confirmed real compromises.

Roughly 30% of those alerts were caused by VPN activity. Mobile phones routing through distant data centers and overlapping security tools triggering alerts against each other accounted for most of the rest. The identity layer has become the highest-noise signal source in the SOC, and separating real compromise from normal variability at scale is one of the hardest unsolved problems in the space. I imagine this problem gets worse with the explosion of Non-Human Identities (NHI).
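For readers who have not built one, an impossible travel rule is essentially a speed check between two logins, and the VPN problem shows up the moment you write it. The sketch below is a generic version, not Intezer's logic. The `Login` type, the 900 km/h ceiling, and the 100 km jitter floor are all illustrative assumptions.

```python
import math
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Login:
    """Hypothetical login event; field names are illustrative."""
    when: datetime
    lat: float
    lon: float
    via_known_vpn: bool = False


def haversine_km(a: Login, b: Login) -> float:
    """Great-circle distance between two logins in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a.lat, a.lon, b.lat, b.lon))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def is_impossible_travel(prev: Login, cur: Login,
                         max_kmh: float = 900.0, min_km: float = 100.0) -> bool:
    """Flag a login pair only if the implied speed beats a commercial flight."""
    if prev.via_known_vpn or cur.via_known_vpn:
        return False  # egress location is the VPN's, not the user's
    distance = haversine_km(prev, cur)
    if distance < min_km:
        return False  # within geo-IP jitter
    hours = (cur.when - prev.when).total_seconds() / 3600
    if hours <= 0:
        return True   # far apart with no elapsed time
    return distance / hours > max_kmh
```

The `via_known_vpn` short-circuit is where most of the noise reduction would come from, and it is only as good as the VPN egress list behind it.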

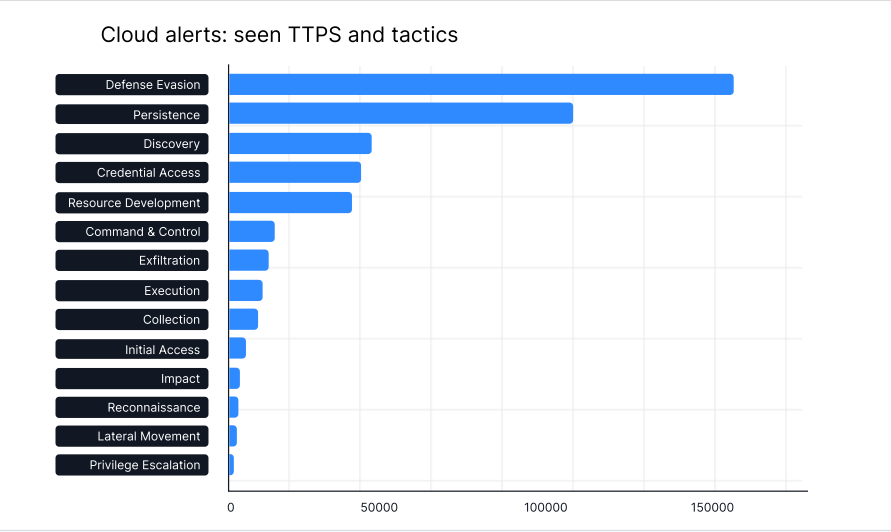

Cloud Attackers Are Playing the Long Game

Defense evasion and persistence dominated cloud TTPs. Attackers are getting in (usually through identity) and sitting quietly: token manipulation, obfuscation, abuse of legitimate cloud features, etc. The goal is long-term access and recon, not immediate impact.

S3 accounted for roughly 70% of all AWS control violations. Buckets still using ACLs instead of IAM policies, missing server access logging, overly permissive cross-account policies.
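As a rough illustration of what checks for those three misconfigurations look like, here is a sketch that evaluates bucket settings already fetched as plain dicts. The input shapes are assumed to mirror what boto3's `get_bucket_acl`, `get_bucket_logging`, and `get_bucket_policy` return, and each check is a deliberately narrow proxy (public ACL grants, wildcard principals) rather than a full CSPM rule. The finding names are made up.

```python
import json
from typing import List, Optional

# Predefined S3 group URIs that make an ACL grant effectively public.
PUBLIC_GROUPS = {
    "http://acs.amazonaws.com/groups/global/AllUsers",
    "http://acs.amazonaws.com/groups/global/AuthenticatedUsers",
}


def s3_findings(acl: dict, logging: dict, policy_json: Optional[str]) -> List[str]:
    """Return illustrative finding names for one bucket's settings."""
    findings = []

    # ACL grants to the public groups instead of scoped policies.
    if any(g.get("Grantee", {}).get("URI") in PUBLIC_GROUPS
           for g in acl.get("Grants", [])):
        findings.append("acl-grants-public-access")

    # Assumption: the logging response omits "LoggingEnabled" when logging is off.
    if "LoggingEnabled" not in logging:
        findings.append("server-access-logging-disabled")

    # Bucket policy that allows any principal, the bluntest form of over-permissive access.
    if policy_json:
        statements = json.loads(policy_json).get("Statement", [])
        if isinstance(statements, dict):  # a single statement may not be wrapped in a list
            statements = [statements]
        for stmt in statements:
            if stmt.get("Effect") == "Allow" and stmt.get("Principal") in ("*", {"AWS": "*"}):
                findings.append("policy-allows-any-principal")
                break

    return findings
```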

I spent years at Accenture and Datadog building the CSPM rules that flag these exact misconfigurations. The fact that they’re still this prevalent in 2026 tells you everything you need to know.

What to Actually Do About It

Invest in making your team AI-fluent, and seriously evaluate AI SOC platforms. LLMs are great for log analysis, triage assistance, detection rule development, playbook drafting. But for alert triage, the build vs. buy math almost never favors build. Reviewing every alert across every tool, investigating regardless of the original verdict, at forensic depth (live memory scans, genetic code analysis, full auditability)? That’s years of engineering, not a side project. The teams that figure out how to pair human judgment with AI speed at that level are the ones that will actually close the coverage gaps this report quantifies.

Stop trusting your tooling’s verdicts blindly. Real threats are hiding in low-severity alerts and EDRs are reporting “mitigated” while endpoints remain compromised. If your team can’t cover the full alert volume manually, that’s a strong case for AI-augmented triage.

Reassess your phishing defense model for browser-based attacks. The attack surface has shifted from attachments to links, from endpoints to browsers. If your phishing defense stack is primarily focused on catching malicious files, you’re defending last year’s battlefield. The code sandbox abuse and callback scam patterns in this report deserve your immediate attention.

Audit your cloud posture against what you’re actually running, not what the docs say. S3 misconfigs aren’t news. But 70% of all AWS violations concentrating there suggests most organizations still haven’t done the unglamorous work of cleaning up notoriously bad configurations.

The Full Report

I don’t say this often about vendor reports: go read the whole thing. It’s 35 pages, it’s technical, and it respects your intelligence.

If you’re a CISO, send it to your SOC lead. If you’re a SOC lead, send it to your analysts. If you build detections, you’ll find data in here that validates half the arguments you’ve been losing in meetings.