Welcome to The Cybersecurity Pulse (TCP)! If you’re serious about staying at the forefront of the latest in the cybersecurity industry, make sure to hit the “Subscribe” button below to get my insights delivered straight to your inbox 📩

Cybersecurity Beyond GenAI

Over the past year, Generative Artificial Intelligence (GenAI) has stolen the show as the most widely adopted type of AI in history. GenAI has had an undeniable impact on society and has changed the world forever. In cybersecurity, GenAI has been a double-edged sword. On one hand, it has enabled malicious actors to run sophisticated phishing campaigns at higher rates [1][2] and has acted as a force multiplier for criminal hacker groups. On the other hand, defenders have found numerous uses for GenAI, including security advisors, code review, and translating natural language into code. I wrote a post earlier this year that covers more of these use cases if you'd like to dive further into this area.

However, GenAI is just the appetizer. As AI and Machine Learning (ML) become increasingly integrated into cybersecurity, they open doors to even more groundbreaking possibilities. This may sound like something out of a sci-fi novel, but imagine a world where ultra-intelligent autonomous agents could single-handedly stop cyber attacks, conduct threat hunts, and/or harden your systems based on new intel.

This is where Autonomous Cyber-defense Agents (ACAs) come into play. ACAs are self-sufficient agents that can carry out, without human intervention, whatever tasks they're trained and fine-tuned to do. Given the time, cost, and talent constraints in our industry, this is an extremely attractive proposition. The burning question is whether we have the technical capability to build commercial, production-grade autonomous agents.

This is what we’ll explore today, and spoiler alert: it seems we're getting closer and closer to a major breakthrough.

What is Deep Reinforcement Learning (DRL)?

"Cyber-defense agents will stealthily monitor the networks, detect the enemy cyber activities while remaining concealed, and then destroy or degrade the enemy malware. They will do so mostly autonomously, because human cyber experts will be always scarce on the battlefield. They have to be capable of autonomous learning because enemy malware is constantly evolving. They have to be stealthy because the enemy malware will try to find and destroy them. At the time of this writing and to the best of our knowledge, autonomous agents with such capabilities remain unavailable." - Source: Autonomous Intelligent Cyber-defense Agent Reference Architecture (AICARA) Release 2.0 - 20193

Fortunately, the last sentence in the above statement is becoming less and less true every day due to advancements in machine learning, specifically in DRL.

DRL is an advanced area of machine learning that combines deep learning and reinforcement learning principles. In this approach, an agent learns to make decisions by interacting with its environment. The agent receives feedback through rewards or penalties based on its actions. This feedback helps the agent to understand which actions lead to better outcomes.
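To make the reward-and-penalty feedback loop concrete, here is a minimal sketch of tabular Q-learning, the classic (non-deep) reinforcement learning update. DRL methods such as DQN keep the same idea but replace the lookup table with a neural network. The two-state "host" environment, its actions, and its reward values are hypothetical and chosen only for illustration.

```python
import random
from collections import defaultdict

# Hypothetical toy environment: a single host is either "clean" or "infected".
# "monitor" is free but lets infections persist; "isolate" removes an infection
# at a small availability cost.
STATES = ["clean", "infected"]
ACTIONS = ["monitor", "isolate"]


def step(state, action, rng):
    """Return (next_state, reward) for the toy environment (illustrative values)."""
    if action == "isolate":
        return "clean", -0.2          # disruption cost of isolating the host
    if state == "infected":
        return "infected", -1.0       # damage from leaving an infection in place
    # Monitoring a clean host: 30% chance it gets infected this step.
    return ("infected" if rng.random() < 0.3 else "clean"), 0.0


def q_learning(episodes=2000, steps=20, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)            # q[(state, action)] -> estimated discounted return
    for _ in range(episodes):
        state = "clean"
        for _ in range(steps):
            # Epsilon-greedy: explore occasionally, otherwise pick the best-known action.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward = step(state, action, rng)
            # Feedback: nudge the estimate toward reward + discounted best next value.
            best_next = max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q


q = q_learning()
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in STATES}
print(policy)
```

With these made-up rewards, the agent should learn to monitor clean hosts and isolate infected ones purely from the feedback signal, with no hand-written rule telling it to do so.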

In cybersecurity, DRL is a game-changer because it is what makes ACAs possible. It forms the foundation that will eventually enable systems to detect and respond to threats autonomously by learning from their interactions with network and system logs, telemetry, threat intel, etc. For example, a DRL agent can learn to recognize the patterns of different attacks, adapt to new threats, and take actions to mitigate them without human intervention. Without DRL, there would be no ACAs.

The History of ACAs

The concept of ACAs has been around for a long time. Back in 2016, the same year AlphaGo beat Lee Sedol, one of the world's top Go players, DARPA organized the Cyber Grand Challenge, in which autonomous computer systems competed against each other in attack-and-defend scenarios. However, the competition saw very little use of machine learning. The strategies used were mostly human-directed and lacked true machine autonomy [4].

Since then, the U.S. military and its allies appear to have been hard at work developing ACAs, as evidenced by the 154-page AICARA report released by NATO Research Task Group (RTG) IST-152. In this report, the research group highlights ACA applications for cyber warfare and goes in depth on the architecture and technical capabilities it considered necessary to create highly intelligent cyber-defense agents. However, it concluded in 2019 that military-grade (pun intended) ACAs were not yet feasible due to technical limitations.

Fast forward to 2023…

Are We There Yet: The Latest Research on ACAs

Earlier this year, researchers at the Department of Energy's Pacific Northwest National Laboratory (PNNL) made significant strides by building an abstract simulation of the conflict between attackers and defenders within a network, using an OpenAI Gym sandbox and DRL. They trained defender agents with four different DRL algorithms, each with the primary objective of maximizing rewards by preventing compromises while minimizing network disruption.
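The "maximize rewards by preventing compromises while minimizing disruption" objective can be sketched as a per-step reward function. The field names, weights, and exact shape below are assumptions for illustration, not the reward used in the PNNL study.

```python
from dataclasses import dataclass


@dataclass
class StepOutcome:
    """What happened in one simulation step (hypothetical fields)."""
    attack_blocked: bool          # defender stopped the attacker's technique this step
    attacker_reached_goal: bool   # attacker achieved impact or exfiltration
    mitigations_applied: int      # number of defensive actions taken this step


def defender_reward(o: StepOutcome,
                    block_bonus: float = 1.0,
                    compromise_penalty: float = 10.0,
                    disruption_cost: float = 0.1) -> float:
    """Credit for blocking, minus a large penalty for compromise,
    minus a small cost per mitigation to discourage disrupting operations.

    All weights are illustrative assumptions.
    """
    reward = 0.0
    if o.attack_blocked:
        reward += block_bonus
    if o.attacker_reached_goal:
        reward -= compromise_penalty
    reward -= disruption_cost * o.mitigations_applied
    return reward


print(defender_reward(StepOutcome(attack_blocked=True, attacker_reached_goal=False,
                                  mitigations_applied=2)))
```

The per-mitigation cost is what keeps an agent from simply switching on every defense at once: blocking pays off, but heavy-handed blocking pays off less.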

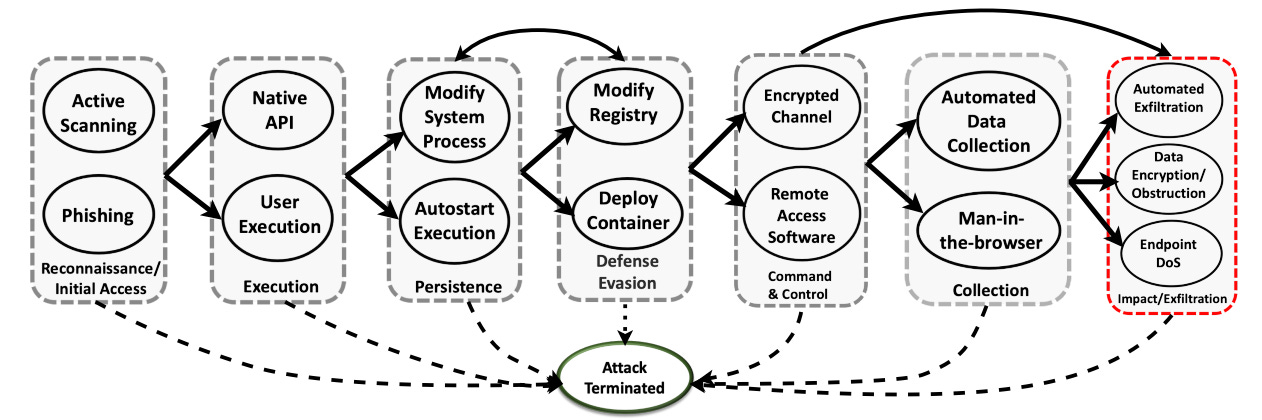

In this simulation, the attacker agents were programmed to use a subset of the tactics and techniques from the MITRE ATT&CK Framework: seven (7) tactics and fifteen (15) techniques. The defender agents were equipped with twenty-three (23) mitigation actions to counter the attackers' moves and contain the attack. Rather than focusing on preventing initial access, the defender agent was programmed to assume the attacker had already infiltrated the network (aka assume breach). This allowed the defender agent to focus on stopping further attack progression, such as lateral movement, execution, defense evasion, and exfiltration, among other tactics.
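One way to picture the scenario's size is as a small data structure: seven tactic stages holding fifteen techniques in total, plus twenty-three defender mitigations, with the attacker starting past initial access. The stage names below are real ATT&CK tactic names, but which seven the study used, the technique IDs (`T01`...), mitigation IDs (`M01`...), and the coverage mapping are all hypothetical.

```python
# Hypothetical assume-breach layout: 7 tactic stages, 15 techniques in total.
# Initial access is deliberately absent because the defender assumes breach.
STAGES = {
    "execution": ["T01", "T02", "T03"],
    "persistence": ["T04", "T05"],
    "privilege-escalation": ["T06", "T07"],
    "defense-evasion": ["T08", "T09", "T10"],
    "discovery": ["T11"],
    "lateral-movement": ["T12", "T13"],
    "exfiltration": ["T14", "T15"],
}
TECHNIQUES = [t for techs in STAGES.values() for t in techs]

# 23 hypothetical mitigations; each blocks one technique (some techniques get two).
MITIGATIONS = {f"M{i + 1:02d}": {TECHNIQUES[i % len(TECHNIQUES)]} for i in range(23)}


def start_state():
    """Assume breach: the attacker is already inside, at the first post-access stage."""
    return {"stage": 0, "active_mitigations": set()}


def uncovered(stage_index, active):
    """Techniques at the given stage that no active mitigation blocks."""
    stage_name = list(STAGES)[stage_index]
    blocked = set().union(*(MITIGATIONS[m] for m in active))
    return [t for t in STAGES[stage_name] if t not in blocked]


print(len(STAGES), len(TECHNIQUES), len(MITIGATIONS))
print(uncovered(0, {"M01"}))
```

In a layout like this, the defender's action space is the 23 mitigations, and its job is to make `uncovered(...)` empty at whatever stage the attacker has reached.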

A bit more on how the two agents were programmed and trained:

The Adversary Model was designed to simulate the behavior of cyber attackers. It laid out a step-by-step progression from initial reconnaissance to the ultimate goal of either damaging critical systems (impact) or extracting data (exfiltration). In this model, each successful technique executed by the attacker allowed it to advance to the next phase. The model was flexible and adapted its approach in response to the defensive tactics it encountered. Central to this model was the assumption that attackers must exploit vulnerabilities that are either undiscovered or unpatchable to advance their attack. The model also accounted for the possibility of an attack being terminated if the attackers could not bypass effective defensive measures. In short, the adversary model was highly intelligent and always considered the various forward paths available to it.
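The progression logic described above (advance on a successful technique, choose among the remaining forward paths, terminate when every path is blocked) can be sketched as a single attacker step function. This is a simplified illustration, not the study's model: the four stages, technique names, and the fixed `success_prob` are assumptions, and the stages start after initial access.

```python
import random

# Hypothetical ordered stages (after initial access), each with candidate techniques.
STAGES = [
    ["execution-a", "execution-b"],
    ["evasion-a", "evasion-b"],
    ["lateral-a"],
    ["exfil-a", "exfil-b"],
]


def attacker_step(stage, blocked, rng, success_prob=0.6):
    """Try to advance one stage; returns (new_stage, status, technique).

    status is "terminated" (every forward path is mitigated), "failed"
    (attempt failed, may retry), "advanced", or "goal" (final stage succeeded).
    """
    options = [t for t in STAGES[stage] if t not in blocked]
    if not options:
        return stage, "terminated", None       # no unblocked forward path remains
    technique = rng.choice(options)             # pick among the remaining paths
    if rng.random() >= success_prob:
        return stage, "failed", technique
    if stage == len(STAGES) - 1:
        return stage, "goal", technique         # impact or exfiltration achieved
    return stage + 1, "advanced", technique


def run_episode(blocked, seed=0, max_steps=50):
    """Play one attack against a fixed set of mitigated techniques."""
    rng = random.Random(seed)
    stage = 0
    for _ in range(max_steps):
        stage, status, _ = attacker_step(stage, blocked, rng)
        if status in ("terminated", "goal"):
            return status
    return "timeout"


print(run_episode({"lateral-a"}))
```

Blocking the only lateral-movement technique here eventually ends the attack at that stage, which mirrors the "terminated if the attackers could not bypass effective defensive measures" behavior.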

The Defense Model’s main objective was to proactively prevent the attackers from achieving their impact or exfiltration goals while minimizing disruption to legitimate system operations. This model emulated some of the challenges defenders face, such as the difficulty of predicting the attackers' next step and operating with imperfect or incomplete information. To address these challenges, the defense model used systems that monitor API calls to estimate the attackers' current position within the network. The model was trained not to take this information at face value because, much like real-world monitoring today, it might be inaccurate or incomplete. In short, before making its next move, the defender agent weighed several key factors, including the possibility that its picture of the attack was incomplete.
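A standard way to act on noisy position estimates is to keep a probability distribution (a belief) over the attacker's stage and update it with Bayes' rule after each alert. The source does not say the PNNL defender does this explicitly, so treat this as a swapped-in illustration; the advance probability and detector accuracy are assumptions, and the "alert" stands in for API-call monitoring.

```python
def predict(belief, advance_prob):
    """Propagate the belief one step: the attacker stays put or advances one stage."""
    n = len(belief)
    new = [0.0] * n
    for s, p in enumerate(belief):
        if s == n - 1:
            new[s] += p                         # final stage is absorbing
        else:
            new[s] += p * (1 - advance_prob)
            new[s + 1] += p * advance_prob
    return new


def correct(belief, observed_stage, accuracy):
    """Bayes update for a noisy alert naming `observed_stage`.

    Assumes the detector is right with probability `accuracy` and otherwise
    names one of the other stages uniformly at random.
    """
    n = len(belief)
    wrong = (1 - accuracy) / (n - 1)
    weighted = [p * (accuracy if s == observed_stage else wrong)
                for s, p in enumerate(belief)]
    total = sum(weighted)
    return [w / total for w in weighted]


# Assume breach: attacker starts at stage 0 of 4.
belief = [1.0, 0.0, 0.0, 0.0]
belief = predict(belief, advance_prob=0.5)      # [0.5, 0.5, 0.0, 0.0]
belief = correct(belief, observed_stage=1, accuracy=0.7)
print([round(p, 3) for p in belief])
```

The alert shifts the belief toward stage 1 without discarding stage 0 entirely, which is exactly the "don't take the signal at face value" behavior described above.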

The performance of the defender agents across the DRL variants tested suggests that we’re getting closer to production-grade ACAs. The Deep Q-Network (DQN) variant performed best of the four DRL algorithms tested. Against the least sophisticated attacks, DQN stopped 79% of attacks midway through the attack stages and 93% by the final stage. Against the most sophisticated attacks, it stopped 57% midway and 84% by the final stage. These results are fairly astounding, especially if, as I suspect, most of today’s breaches would fall into the “least sophisticated” bucket, where this ACA stopped 93% of the attacks in the scenarios it was trained on. I’m not sure how this compares to humans in the same scenario, but I’d guess that ACAs take the cake on this one.
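For readers curious what makes DQN tick: it trains a neural network Q(s, a) toward a bootstrapped target, y = r + γ · max over a' of Q_target(s', a'), or just y = r when the episode has ended, where Q_target is a periodically synced copy of the network. The sketch below computes those targets and the mean squared TD loss in plain Python, with ordinary functions standing in for the two networks; the discount factor and the toy "networks" are illustrative assumptions, not the study's settings.

```python
from typing import Callable, List, Sequence, Tuple

# (state, action, reward, next_state, done)
Transition = Tuple[Sequence[float], int, float, Sequence[float], bool]
QFunction = Callable[[Sequence[float]], List[float]]  # state -> one Q-value per action


def dqn_targets(batch: List[Transition], target_q: QFunction, gamma: float = 0.99) -> List[float]:
    """y = r if the episode ended, else r + gamma * max_a' Q_target(s', a')."""
    targets = []
    for _state, _action, reward, next_state, done in batch:
        if done:
            targets.append(reward)
        else:
            targets.append(reward + gamma * max(target_q(next_state)))
    return targets


def td_loss(batch: List[Transition], online_q: QFunction, target_q: QFunction,
            gamma: float = 0.99) -> float:
    """Mean squared error between the online Q(s, a) and the DQN targets."""
    ys = dqn_targets(batch, target_q, gamma)
    errors = [(online_q(s)[a] - y) ** 2 for (s, a, _, _, _), y in zip(batch, ys)]
    return sum(errors) / len(errors)


# Toy stand-ins for the target and online networks (hypothetical).
target_net = lambda s: [s[0], 2 * s[0]]
online_net = lambda s: [0.0, 0.0]
batch = [([1.0], 0, 1.0, [2.0], False), ([1.0], 1, -1.0, [0.0], True)]
print(dqn_targets(batch, target_net, gamma=0.5))
print(td_loss(batch, online_net, target_net, gamma=0.5))
```

In a real DQN, gradient descent on this loss updates the online network's weights, while the target network is held fixed between syncs to keep the targets stable.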

Samrat Chatterjee of PNNL says that the team's goal is to "create an autonomous defense agent that can learn the most likely next step of an adversary, plan for it, and then respond in the best way to protect the system" [5]. This sounds eerily similar to the cyber warfare scenario NATO RTG IST-152 described, in which agents stop malware from spreading.

The paper concludes by stating that “future work will include developing DRL-based transfer learning approaches within dynamic environments for distributed multi-agent defense systems.” Given that these agents may be short-lived and can be neutralized by attackers, it makes a ton of sense for the researchers to focus the next phase of their work on real-time knowledge transfer between agents.

Looking Ahead

The world has changed a lot since the AICARA report came out in 2019, and there have been significant technological advancements since then, as evidenced by the PNNL research. Though being able to purchase ACAs for distinct use cases to augment your security team may be 3-5 years away, it’s important to keep an eye on this space, as it will truly change the cybersecurity landscape forever. I won’t enumerate them here, but the potential benefits are enormous. However, we must also consider the downsides, including how attackers may leverage such agents to carry out even more sophisticated attacks.

References

Below are my footnote references:

1. https://www.msspalert.com/news/email-cyberattacks-spiked-nearly-500-in-first-half-of-2023-acronis-reports
2. https://siliconangle.com/2023/08/15/new-reports-show-phishing-rise-getting-sophisticated/
3. https://apps.dtic.mil/sti/pdfs/AD1080471.pdf
4. https://cset.georgetown.edu/wp-content/uploads/Autonomous-Cyber-Defense-1.pdf
5. https://arxiv.org/pdf/2302.01595.pdf
6. https://github.com/Limmen/awesome-rl-for-cybersecurity