TCP 129: Vercel Breach, Mythos Leak, the SIEM arms race, and 3 Defender 0 days

OAuth grant abuse, leaked models, and $89M chasing the SIEM of the future

Welcome to The Cybersecurity Pulse (TCP)! I’m Darwin Salazar, Head of Growth at Monad and former detection engineer in big tech. Each week, I bring you the latest security innovation and industry news. Subscribe to receive weekly updates! 📧

AI signals in email attacks: Boosting detection and threat hunting

Most AI “tells” in email are noisy and unreliable on their own. But used correctly, they can still strengthen detections and improve threat hunting.

In Sublime Security’s upcoming webinar, we break down which AI signals actually work, how to apply signal stacking, and where these approaches fail. See real examples and a practical framework you can use immediately.

Howdy 👋 - Hope you’re having a great week wherever you’re tuning in from!

This has been a wild week. Anthropic’s Mythos model was apparently accessed by an unauthorized 3rd party, Vercel was breached via 3rd party OAuth abuse, Lovable had an exposure kerfuffle, three 0-days were found in MSFT Defender, and security stocks rebounded. And of course, there’s more marinating in the background (case in point: Cyera announces acq. of Ryft just as I was about to press ‘send’ 🙃).

Before we dive in, two plugs:

If you’re a security operator in NYC, come hang out this upcoming Monday (Apr. 27th) at this live show I’m co-hosting with Intezer at the Nasdaq. Security leaders from Salesforce, Oscar Health, and many highly regarded folks you probably know will be there. Truly a can’t-miss event!

I wrote a thing on the contents of and detection opportunities for OpenAI Enterprise Audit Logs. Read it here.

Cool. Now let's get into it!

TL;DR ✏️

🕳️ Mythos allegedly accessed via third-party vendor — Discord group reportedly combined contractor creds with URL patterns from the Mercor breach; story still developing

🧨 Vercel breached via Context.ai OAuth grant — Roblox cheat → Lumma Stealer → inherited “Allow All” token → prod env vars; campaign bigger than Context.ai alone

🧱 GitHub’s agentic workflows threat model — assume the agent is compromised; secretless execution, safe-outputs pipeline, damage-containment over prevention

🤝 Cyera acquires Ryft to extend its agentic AI data security play — Fourth acquisition for Cyera (now $9B val, $1.7B raised); deal size undisclosed

🎭 Lovable’s BOLA + disclosure meltdown — a few API calls → any user’s source code and DB creds; “fixed” only for projects created after Nov 2025

🪟 Three MSFT Defender 0-days, two still unpatched — BlueHammer (CVE-2026-33825) patched, RedSun and UnDefend live with public PoCs and in-the-wild exploitation

📡 Detection engineering for OpenAI Enterprise audit logs — Log set deep dive + 5 detection opportunities

📧 Sublime webinar: AI signals in email attacks — Alex Orleans and Luke Wescott on threat hunting with AI signals, signal stacking, and where they break; May 13

🏹 Artemis out of stealth with $70M — AI-native SIEM contender, Felicis-led, angels from Demisto and Abnormal founders

🧭 Spectrum exits stealth with $19M — AI-powered detection engineering layer on top of existing SIEMs, led by TechOperators and Skinos (Shlomo Kramer’s new fund)

💊 Capsule Security exits stealth with $7M — runtime trust layer for AI agents; disclosed ShareLeak (CVE-2026-21520) and PipeLeak alongside launch

Plus: Aikido ships Endpoint Protection for developer workstations, Microsoft publishes KQL for hunting DPRK IT workers in Workday and DocuSign, Cowbell launches a mid-market policy with affirmative AI and quantum coverage, and more 👇

⚒️ Picks of the Week ⚒️

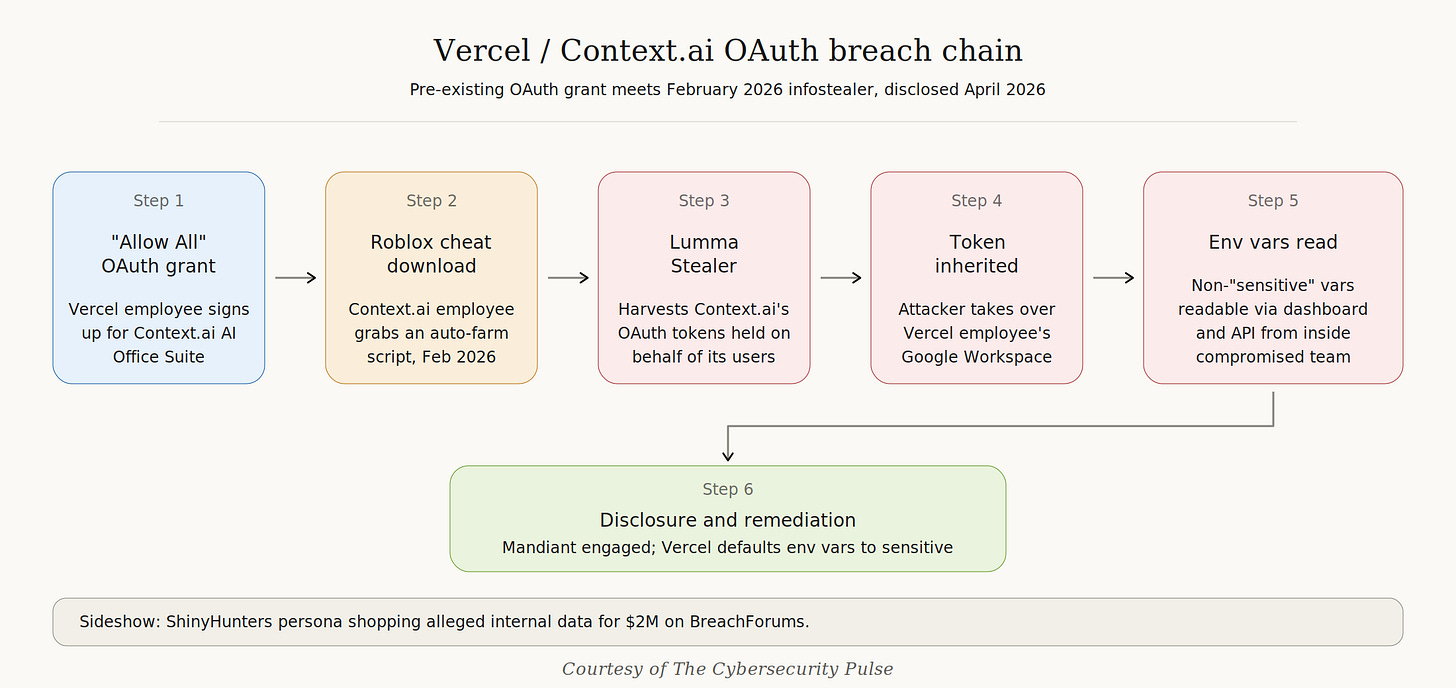

Vercel breached via Context.ai x GWS OAuth grant

A Vercel employee connected Context.ai’s AI Office Suite to their enterprise Google Workspace and granted “Allow All” permissions. When Context.ai got popped (a Context.ai employee caught Lumma Stealer in February 2026, reportedly from a Roblox cheat download of all things), the attacker inherited that OAuth grant and walked right into Vercel.

From there they escalated by sifting through environment variables not marked as “sensitive,” (😔) which were sitting in plaintext in the dashboard and API. Mandiant is engaged, and Vercel now defaults env vars to sensitive. No npm packages were tampered with, they say.
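If you want a quick gut check on your own setup, here’s a minimal Python sketch of the idea: flag variables whose names look like secrets but aren’t marked sensitive. The record shape (`key`, `sensitive`) and the name patterns are my assumptions for illustration, not Vercel’s API schema.

```python
import re

# Hypothetical record shape: {"key": str, "sensitive": bool}.
# This is NOT Vercel's API schema; adapt it to whatever your export looks like.
SECRET_NAME_HINTS = re.compile(
    r"(SECRET|TOKEN|PASSWORD|API_?KEY|PRIVATE_?KEY|DATABASE_URL)",
    re.IGNORECASE,
)


def find_exposed_env_vars(env_vars):
    """Return names of variables that look like secrets but are not flagged sensitive."""
    return [
        v["key"]
        for v in env_vars
        if not v.get("sensitive", False) and SECRET_NAME_HINTS.search(v["key"])
    ]


example = [
    {"key": "STRIPE_API_KEY", "sensitive": False},
    {"key": "NEXT_PUBLIC_SITE_NAME", "sensitive": False},
    {"key": "DB_PASSWORD", "sensitive": True},
]
print(find_exposed_env_vars(example))
```

Name matching is a crude heuristic and will miss oddly named secrets, so treat it as a starting point for a review, not a guarantee.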

Also, a ShinyHunters persona is shopping alleged internal data for $2M, but Google TAG’s Austin Larsen called the claimant “likely an imposter.”

No AI-powered Mythos nuke. Just 3rd party OAuth token grant abuse similar to what happened with Salesloft Drift. It’s exactly what Pat Opet, CISO @ JP Morgan, warned about almost exactly a year ago.

Update: Vercel disclosed on April 22 that it identified additional compromised accounts from independent prior compromises, and CEO Guillermo Rauch said the threat actor appears to be running a broader token-harvesting campaign beyond Context.ai. Context.ai has deprecated the AI Office Suite entirely.

HugOps to my friends on the Vercel security team.

Mythos allegedly leaked via third-party vendor, story still developing

Per TechCrunch, a group of Discord users claim to have gained unauthorized access to Anthropic’s Claude Mythos Preview on the same day it was announced, reportedly by combining compromised credentials from a third-party contractor with URL naming conventions reconstructed from a recent data breach at Mercor. Anthropic confirmed it’s investigating and said there’s no evidence its own systems were impacted or that activity extended beyond the vendor environment.

A ShinyHunters impersonator has since tried to take credit, circulating AI-generated screenshots as proof, but security researcher Dominic Alvieri and others quickly called the claim out as fabricated.

Story is still developing, more to come as Anthropic’s investigation plays out, but if even part of this holds up it’s detrimental to the whole walled-off Project Glasswing model.

How Did We Miss This?

Every security leader has asked this at some point. Usually after an incident review, usually at 11pm, usually over cold pizza. And the answer is almost always the same: detection gaps nobody had time to map, rules nobody could write fast enough, and coverage that quietly broke weeks ago without firing a single alert. AI-powered attacks are just making that gap harder to ignore.

Spectrum is built for that exact problem. An AI-powered detection platform that maps your coverage, researches emerging threats, and writes custom, deployment-ready detections against your data, wherever it lives. The stuff your team would write if they had ten more hours in the day.

Fewer gaps, fewer blind spots, fewer “how did we miss this” moments.

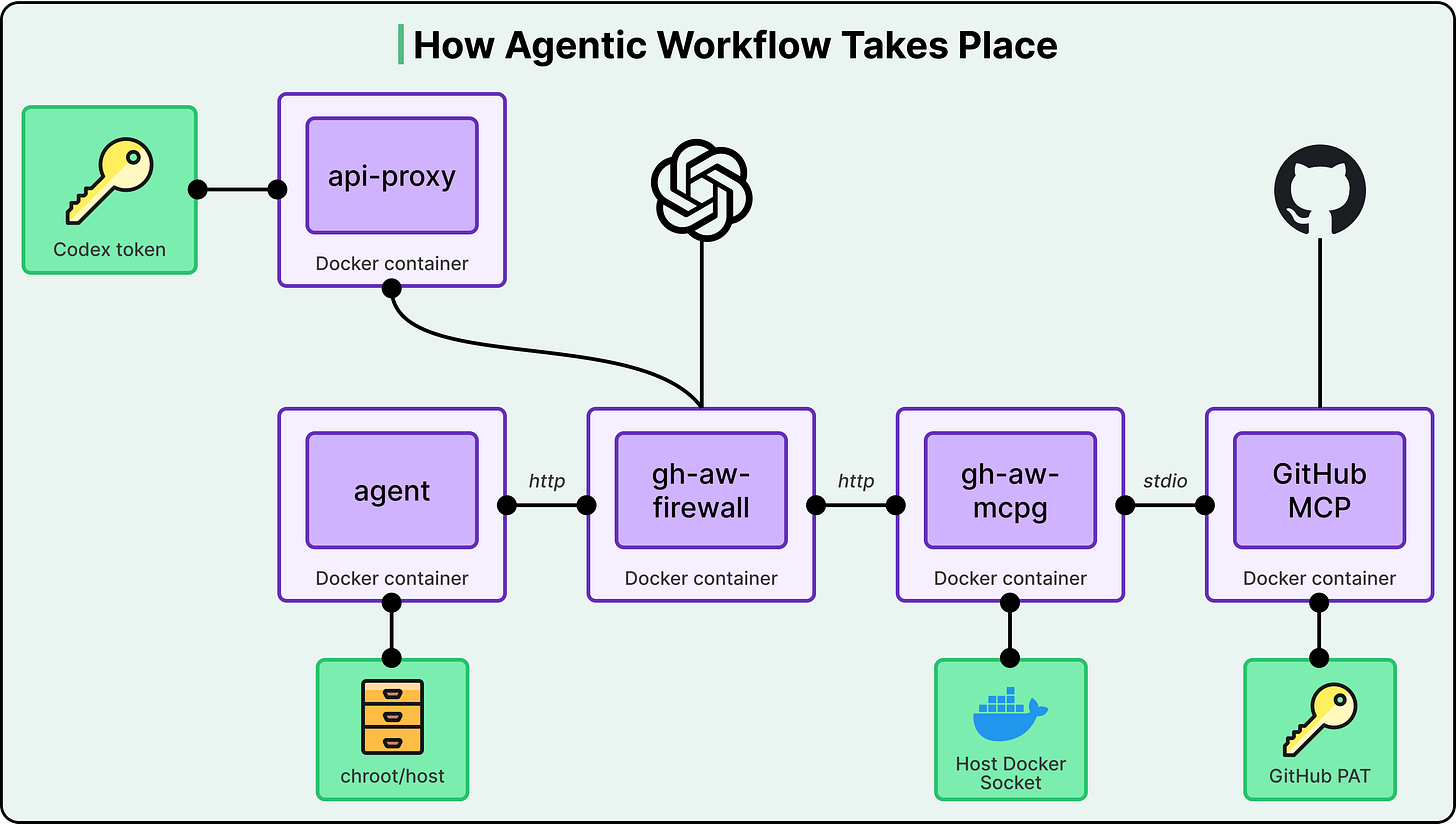

GitHub’s security architecture for agentic workflows: assume the agent is compromised

GitHub published the threat model and architecture behind their Agentic Workflows, and it’s the most informative write-up I’ve seen on running agents in CI/CD pipelines. Shoutout to ByteByteGo for this one. Highly recommend reading if you’re using AI coding tools in an enterprise environment.

One core assumption they’ve made is that the agent will try to read and write state it shouldn’t, communicate over unintended channels, and abuse legitimate channels. In other words, the agent will misbehave.

Three-layer architecture (substrate, configuration, planning), with the agent running secretless: MCP auth tokens live in a separate gateway container, LLM tokens in an isolated proxy, all traffic through a dedicated firewall container. Every agent output goes through a deterministic “safe outputs” pipeline that checks operations against an allowlist, enforces quantity limits, and scans for leaked secrets before anything gets committed.
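To make the “safe outputs” idea concrete, here’s a rough Python sketch of a deterministic gate that applies the three checks described above: allowlist, quantity limits, and secret scanning. Everything in it (operation names, limits, the `AgentOutput` type, the regexes) is my own illustration and not GitHub’s implementation.

```python
import re
from dataclasses import dataclass

# Illustrative allowlist: operation name -> max number allowed per run.
# These names and limits are assumptions, not GitHub's actual configuration.
ALLOWED_OPERATIONS = {"create_issue_comment": 1, "create_pull_request": 1, "add_label": 3}

# A few well-known credential shapes; a real scanner would cover far more.
SECRET_PATTERNS = [
    re.compile(r"ghp_[A-Za-z0-9]{36}"),                  # GitHub classic PAT shape
    re.compile(r"AKIA[0-9A-Z]{16}"),                     # AWS access key ID shape
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),   # PEM private key header
]


@dataclass
class AgentOutput:
    operation: str
    body: str


def filter_safe_outputs(outputs):
    """Split agent outputs into accepted ones and (output, reason) rejections."""
    accepted, rejected, counts = [], [], {}
    for out in outputs:
        limit = ALLOWED_OPERATIONS.get(out.operation)
        if limit is None:
            rejected.append((out, "operation not on allowlist"))
        elif counts.get(out.operation, 0) >= limit:
            rejected.append((out, "quantity limit exceeded"))
        elif any(p.search(out.body) for p in SECRET_PATTERNS):
            rejected.append((out, "possible secret in output"))
        else:
            counts[out.operation] = counts.get(out.operation, 0) + 1
            accepted.append(out)
    return accepted, rejected
```

The point of the design is that nothing here asks the model whether the output is safe. The gate is plain code with fixed rules, so a compromised agent can’t talk its way past it.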

The “secrets are physically unreachable from the agent” pattern transfers well beyond GitHub and is a great example of the “contain damage” vs. “stop breach” approach.

Detection engineering for OpenAI Enterprise audit logs

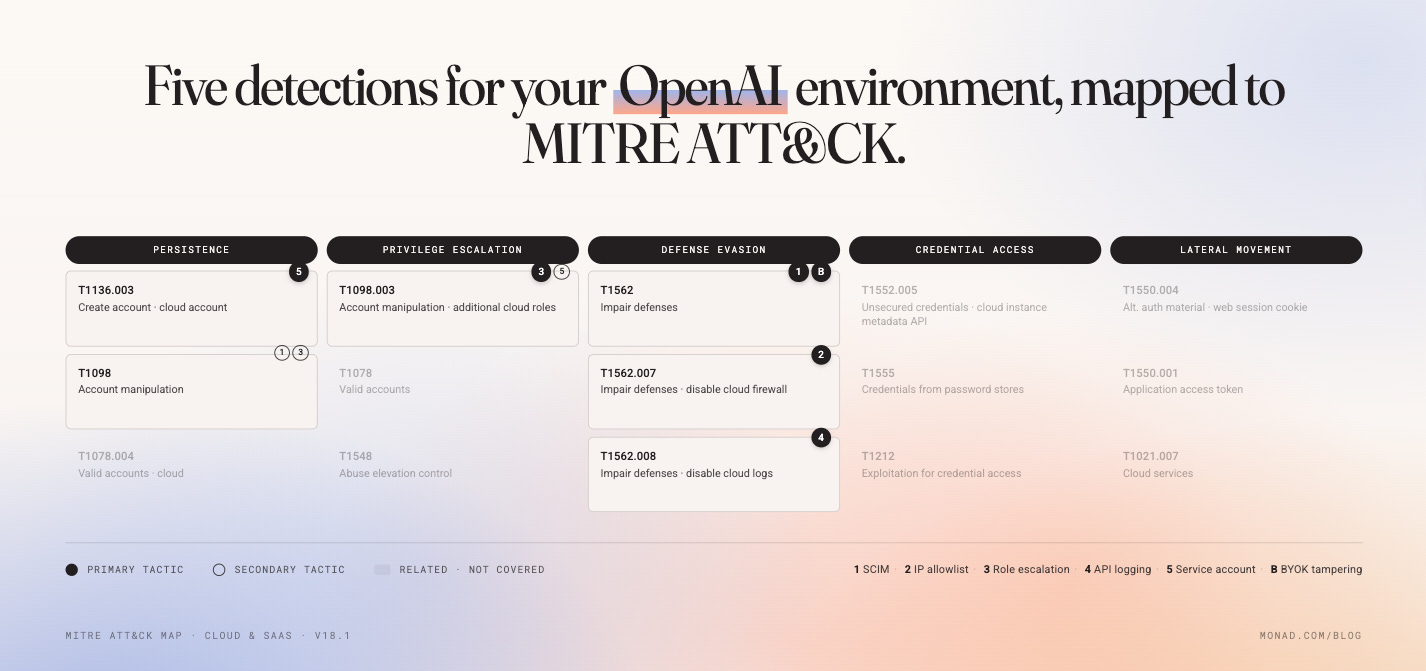

I dropped a new post on the Monad blog walking through the 5 detections worth shipping first against OpenAI’s enterprise audit log (51 event types, immutable once enabled). The starter pack: SCIM disabled, IP allowlist deactivated or broadened, role changes with permissions_added, api_call_logging reduced, and owner-level service account creation. Plus a bonus on external key (BYOK) tampering.

Same playbook as the Claude Code OTel work we put out earlier this month. As AI platforms become control planes in your stack, their audit logs need to be treated more like cloud or identity logs.

If you’re running enterprise ChatGPT, this blog should serve as a great starting point for understanding the log source + getting some coverage on high signal activity.
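For a feel of how a few of those starter detections could be wired up, here’s a small Python sketch of a rule table run over parsed events. Big caveat: the event type strings (`scim.disabled`, `role.updated`, and so on) and the `details`/`actor` field layout are placeholders I made up for illustration. Only `permissions_added` comes from the post, so check the real schema before borrowing anything.

```python
# Event type strings and field names are PLACEHOLDERS for illustration only.
# Verify them against the actual audit log schema before use.
def _details(event):
    return event.get("details", {})


RULES = [
    ("SCIM disabled", lambda e: e["type"] == "scim.disabled"),
    ("IP allowlist deactivated", lambda e: e["type"] == "ip_allowlist.deactivated"),
    (
        "Role gained permissions",
        lambda e: e["type"] == "role.updated" and bool(_details(e).get("permissions_added")),
    ),
    (
        "Owner-level service account created",
        lambda e: e["type"] == "service_account.created" and _details(e).get("role") == "owner",
    ),
]


def match_detections(events):
    """Return (rule_name, actor) for every rule an event satisfies."""
    hits = []
    for event in events:
        for name, predicate in RULES:
            if predicate(event):
                hits.append((name, event.get("actor", "unknown")))
    return hits
```

Keeping rules as data makes it easy to add the remaining detections (reduced API call logging, BYOK tampering) once you’ve confirmed how those events actually show up in your logs.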

Cyera Acquires Ryft to Extend Its Agentic AI Security Platform

As I was getting ready to press ‘send’, I learned that Cyera announced its acquisition of Ryft, a secure data lake startup founded in 2024 and backed by Index Ventures and Bessemer Venture Partners. Deal terms were not disclosed. This is Cyera’s fourth acquisition in five years, following Trail Security ($162M, Oct 2024) and Otterize (June 2025).

The thesis, as co-founder and CTO Tamar Bar-Ilan calls it, is a “unified control plane” for agentic AI, fusing data discovery, classification, DLP, identity, and now AI-ready data lake infrastructure in one platform.

Cyera is valued at $9B per their last raise. Most companies stagnate around this point. Will be fun to see them continue cooking.

Lovable’s BOLA and how not to handle disclosure

A researcher (@weezerOSINT) showed that any free Lovable account could make a handful of API calls and walk away with another user’s source code, chat history, and database credentials.

Root cause: a textbook Broken Object Level Authorization (BOLA) flaw, OWASP API #1. The project endpoints verified the auth token but never checked if the user actually owned the resource. Reported through HackerOne on March 3rd and marked as a duplicate.

The worst part: Lovable shipped a fix in March, but only for projects created after November 2025. Every pre-existing project stayed wide open. New projects returned 403, legacy projects returned 200 OK with full data.
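If BOLA is new to you, the whole bug class fits in a few lines. Here’s a minimal Python sketch contrasting a handler that only trusts the auth token with one that also checks ownership. The in-memory `PROJECTS` store and function names are hypothetical and have nothing to do with Lovable’s actual code.

```python
# Hypothetical in-memory data; illustrative only, not Lovable's code or schema.
PROJECTS = {
    "proj-1": {"owner_id": "alice", "source": "print('hi')"},
    "proj-2": {"owner_id": "bob", "source": "print('secret')"},
}


def get_project_vulnerable(authenticated_user_id, project_id):
    """BOLA: the caller is authenticated, but ownership is never checked."""
    project = PROJECTS.get(project_id)
    if project is None:
        return 404, None
    return 200, project


def get_project_fixed(authenticated_user_id, project_id):
    """Same lookup, plus an object-level authorization check."""
    project = PROJECTS.get(project_id)
    if project is None:
        return 404, None
    if project["owner_id"] != authenticated_user_id:
        return 403, None
    return 200, project


# alice asks for bob's project
print(get_project_vulnerable("alice", "proj-2")[0])  # 200
print(get_project_fixed("alice", "proj-2")[0])       # 403
```

That 200 vs. 403 split is the same behavior described above for legacy vs. new projects.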

Then the PR arc: “did not suffer a data breach” → blame documentation → blame HackerOne → apologize for the apology. They also casually dropped that public project code visibility is “intentional behavior” and “by design.”

$6.6B valuation, nearly 8M users, enterprise customers including Uber, Klarna, Deutsche Telekom, and Zendesk, and the #1 item on the OWASP API Top 10 is sitting in production.

The wild part is the response: deny, gaslight, then blame HackerOne for closing a ticket your own triage team owns.

Spectrum exits stealth with $19M for AI-era detection engineering

SF-based Spectrum Security launched from stealth with $19M in seed funding led by TechOperators, with participation from WhiteRabbit Ventures, Skinos Ventures (the new fund from Shlomo Kramer and Yishay Yovel), and Alumni Ventures.

The platform sits on top of existing SIEMs, data lakes, and EDRs to automate detection authoring, continuously find coverage gaps, and maintain detection health as log schemas and infrastructure drift.

The team is stacked (ex-leadership at Siemplify and Opus Security, operators at Appian, etc.) and is attacking the same problem most detection eng teams have: rules that quietly break when infrastructure shifts, coverage gaps nobody mapped, drift nobody noticed.

Backed by Nir Polak (Exabeam founder) and Kevin Skapinetz (TechOperators), this is a strong detection-engineering bet especially as the ground shifts from under us in the SecOps space.

Three Defender zero-days in the wild, two still unpatched

A researcher, Chaotic Eclipse, dropped three Defender Antivirus zero-days after beefing with Microsoft over disclosure: BlueHammer, RedSun, and UnDefend. BlueHammer and RedSun are local priv-esc; UnDefend is a DoS that kills Defender’s security definition updates. Only BlueHammer (CVE-2026-33825) is patched. The other two are still live with public PoCs on GitHub (yes, Microsoft-owned GitHub lol). Huntress reported BlueHammer exploitation starting April 10, with RedSun and UnDefend PoC activity kicking off April 16.

The disclosure drama is pretty wild. Per Chaotic Eclipse, MSRC required a video demonstration of the exploit before they’d triage the report, then dismissed the case when the researcher declined. Microsoft later said video demos are not a requirement for disclosure, which raises the obvious question of who told the researcher they were.

UnDefend is the sneakiest of the three because silently killing definition updates means the box looks healthy in your console while going blind in real time. A stark reminder that the software meant to secure your org could also be vulnerable. Also a reminder for software vendors to patch their shit when researchers surface findings. Don’t gaslight or brush it under the rug as it may very likely come back to bite and cost more than the initial fix would’ve cost.
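One cheap compensating check, since the console may keep saying “healthy”: alert on definition age directly. Here’s a minimal Python sketch. The host record fields (`hostname`, `definitions_updated_at`) and the 2-day threshold are assumptions on my part, so map them to whatever your inventory or EDR export really provides.

```python
from datetime import datetime, timedelta, timezone


def stale_definition_hosts(hosts, max_age=timedelta(days=2), now=None):
    """Return hostnames whose last definition update is older than max_age.

    `hosts` is a list of dicts with hypothetical keys 'hostname' and
    'definitions_updated_at' (a timezone-aware datetime).
    """
    now = now or datetime.now(timezone.utc)
    return sorted(
        h["hostname"] for h in hosts if now - h["definitions_updated_at"] > max_age
    )
```

The idea is to measure the thing the attack breaks (update freshness) rather than trusting the agent’s self-reported status.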

🔮 The Future of Security 🔮

AI Security

Capsule Security Exits Stealth With $7M to Secure AI Agents at Runtime

Capsule Security, founded by Naor Paz (ex-F5, Unit 8200) and Lidan Hazout (ex-Transmit Security), exited stealth with $7M in seed funding led by Lama Partners and Forgepoint Capital International.

The platform sits in the agent execution path and blocks unsafe tool calls, manipulation, and data exfiltration at runtime, with support for Cursor, Claude Code, Copilot Studio, ServiceNow, and Salesforce Agentforce. Alongside the launch, Capsule disclosed two agent-platform vulnerabilities, ShareLeak in Copilot Studio (now tracked as CVE-2026-21520 and patched) and PipeLeak in Salesforce Agentforce.


Endpoint Security

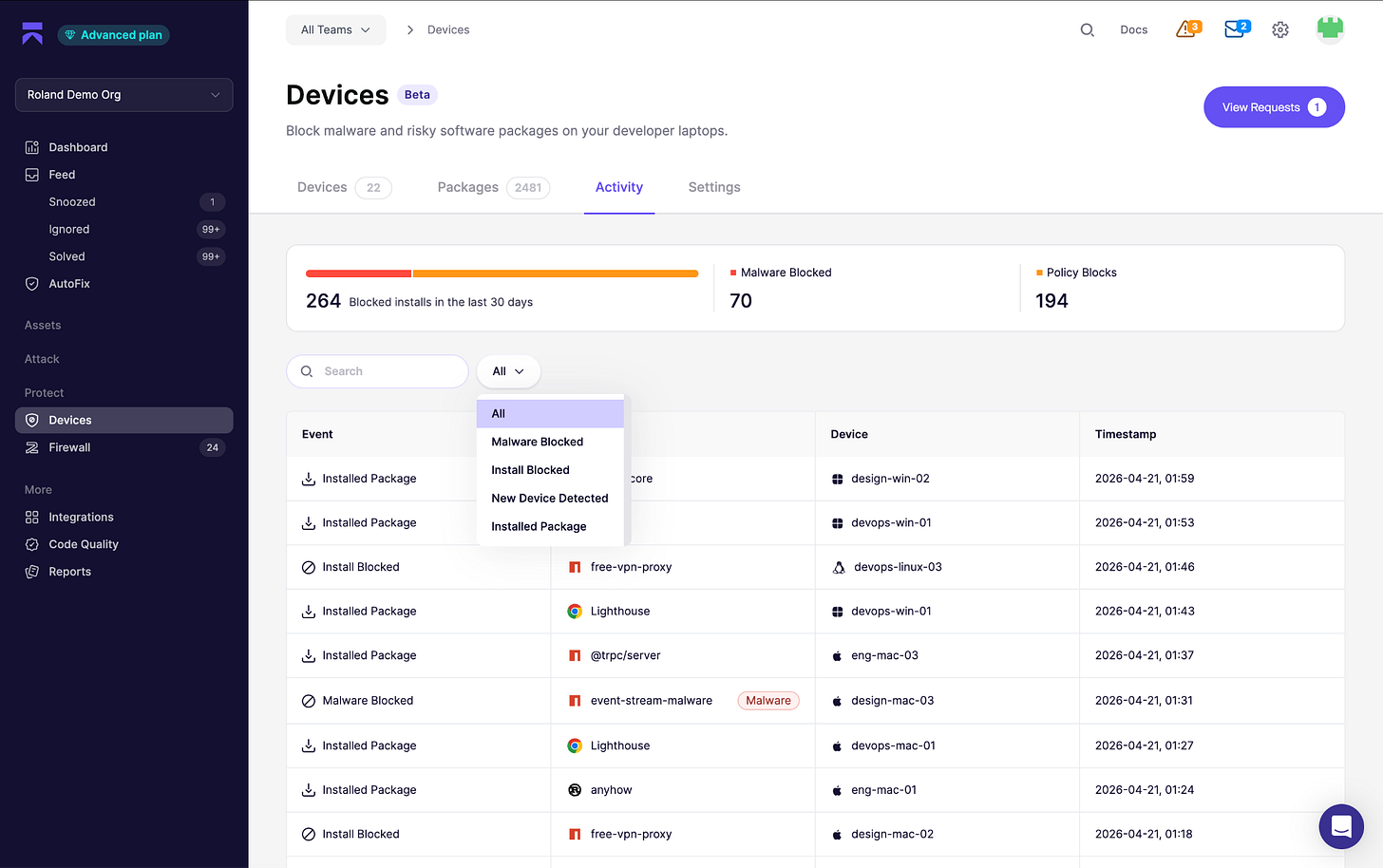

Aikido Launches Endpoint Protection for Developer Devices

Aikido launched Endpoint, a lightweight agent for dev workstations that inspects packages, IDE extensions, browser extensions, MCP servers, and AI tools before installation. Built on their open source Safe Chain project.

Notable default: any package published less than 48 hours ago gets held back in favor of the most recent version that clears the age policy, killing the highest-risk window right after a compromised version ships.

Dev laptops are the softest spot in most orgs. Devs have local admin, install whatever they want, run MCPs calling APIs with prod creds, and hit npm/PyPI a hundred times a day. Most traditional EDR doesn’t understand any of that telemetry. The 48-hour age policy is the kind of simple heuristic that would have blocked a big chunk of recent supply chain attacks (Axios staged its dropper less than 24 hours before the compromised versions pulled it in, per Aikido’s own writeup). The endpoint space is getting crowded, but the developer-first and SMB-friendly approach is a true differentiator.
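The age policy is simple enough to sketch in a few lines of Python. This is my own illustration of the heuristic as described (hold back anything newer than 48 hours, fall back to the newest version that clears the bar), not Aikido’s implementation. The `(version, published_at)` input shape is an assumption.

```python
from datetime import datetime, timedelta, timezone

MIN_AGE = timedelta(hours=48)


def pick_version(versions, now=None):
    """Return the most recently published version that is at least MIN_AGE old.

    `versions` is a list of (version_string, published_at) tuples with
    timezone-aware datetimes. Returns None if nothing clears the age policy.
    """
    now = now or datetime.now(timezone.utc)
    eligible = [(published, ver) for ver, published in versions if now - published >= MIN_AGE]
    if not eligible:
        return None
    return max(eligible)[1]


now = datetime(2026, 4, 24, 12, 0, tzinfo=timezone.utc)
versions = [
    ("1.0.0", now - timedelta(days=30)),
    ("1.1.0", now - timedelta(days=3)),
    ("1.1.1", now - timedelta(hours=5)),  # too fresh, held back
]
print(pick_version(versions, now=now))  # 1.1.0
```

The tradeoff is obvious: you also delay legitimate security fixes by up to two days, so you’d likely want an override path for urgent patches.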

Insurance

Cowbell Debuts Prime One Cyber Insurance with AI and Quantum Risk Cover

Cowbell Cyber launched Prime One, a cyber insurance product targeting mid-market companies ($250M-$1B revenue) with up to $10 million in coverage limits.

The policy includes affirmative coverage for AI-related incidents and quantum computing risks, positioning itself ahead of the post-quantum cryptography curve. 4D PR play, imo.

SaaS Security

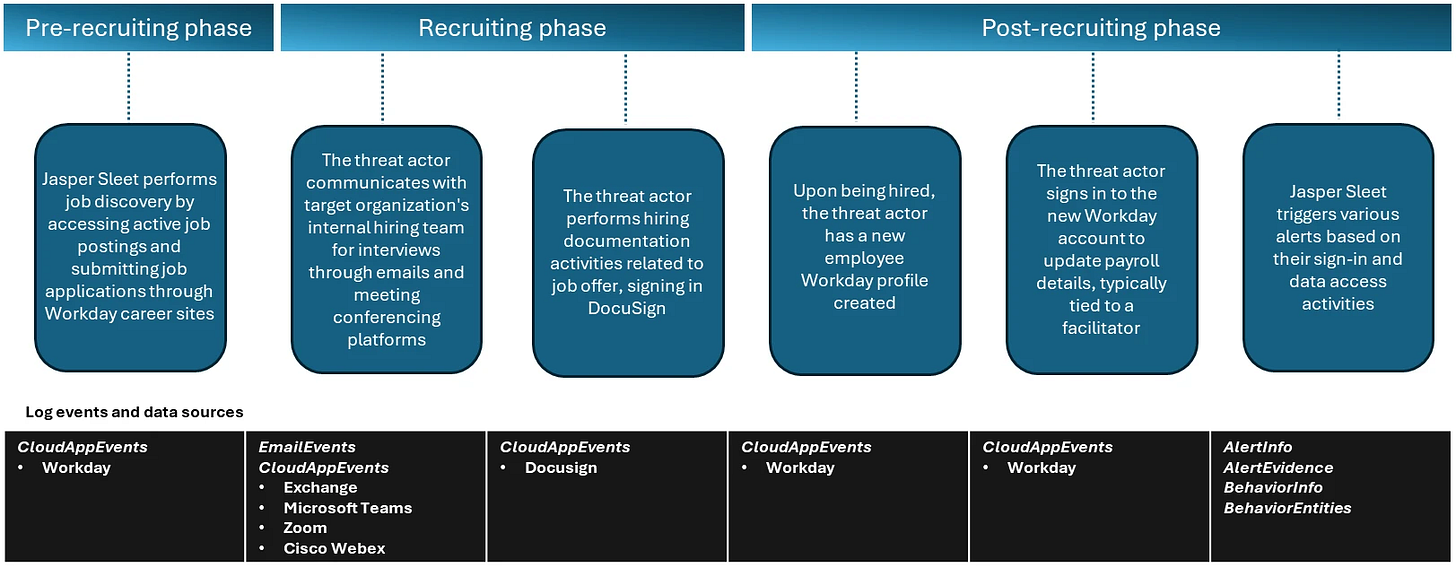

Microsoft drops detection strategies for North Korean IT workers

MSFT published KQL and detection logic for Jasper Sleet, the DPRK-aligned actor posing as legit hires with AI-assisted fake personas. Ready-to-ship queries for Workday, Teams, Zoom, Webex, and DocuSign in the post.

Probably the biggest novel piece from it all is that they recommend hunting the pre-recruitment phase. They’ve observed Jasper Sleet hitting Workday’s /hrrecruiting/* API endpoints from known actor infrastructure in a consistent, repeating pattern across multiple external accounts, which looks different from a normal applicant.

The fact that you need to detect DPRK operatives inside your HRIS in 2026 is wild but here we are. What’s cool about this post is that it shifts the detection surface left of the endpoint entirely. Your SaaS audit logs (Workday, DocuSign, Zoom) are threat hunting surfaces now, not just compliance logs. If you’re not piping HR platform telemetry, that could be a big gap.
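Microsoft’s post ships KQL, so go there for the real queries. To show the shape of the “one source, many external accounts” hunt in plain code, here’s a rough Python sketch. The event keys (`source_ip`, `account`, `path`) and the threshold of 3 accounts are hypothetical and not taken from Workday’s log schema or Microsoft’s queries.

```python
from collections import defaultdict


def flag_shared_sources(events, min_accounts=3):
    """Find source IPs hitting /hrrecruiting/ endpoints across many accounts.

    `events` is an iterable of dicts with hypothetical keys
    'source_ip', 'account', and 'path'.
    """
    accounts_by_ip = defaultdict(set)
    for e in events:
        if e["path"].startswith("/hrrecruiting/"):
            accounts_by_ip[e["source_ip"]].add(e["account"])
    return {
        ip: sorted(accounts)
        for ip, accounts in accounts_by_ip.items()
        if len(accounts) >= min_accounts
    }
```

A normal applicant is one account from a handful of IPs. The inverse, one IP fanning out across several applicant accounts, is the pattern worth a second look (shared office or VPN egress will cause some noise).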

Security Operations

Artemis emerges from stealth with $70M to fight AI-powered attacks with AI

Artemis came out of stealth last week with $70M in combined seed and Series A funding, just six months after founding. Series A led by Felicis with First Round and Brightmind returning, plus angels from the founders of Demisto and Abnormal AI, the former Splunk CEO/CTO, and execs from CrowdStrike, Palo Alto, Microsoft, and Okta. Nice cap table.

The pitch is a data model built from each customer’s telemetry, fusing log data with business context to generate tuned detections, investigate alerts, and surface correlated attack stories. Early customers include Mercury, Wix, Lemonade, and Abnormal AI.

There have been quite a few SIEM contenders that have come out of stealth in the past year. Each has a slightly differentiated approach. I like Artemis’ pitch because it’s adaptive and built off of the reality of a customer’s environment as opposed to handing off great software to customers and expecting them to realize the full value of it.

My main question is: can they get in front of the CrowdStrikes, Palos, and Splunks of the world, who are all striving toward the same narrative with way more distribution? In any case, great funding, founding team, and backing. SecOps space is a fun one!

Interested in sponsoring TCP?

Sponsoring TCP not only helps me continue to bring you the latest in security innovation, but it also connects you to a dedicated audience of 20,000+ CISOs, practitioners, founders, and investors across 135+ countries 🌎

Bye for now 👋🏽

That’s all for this week… ¡Nos vemos la próxima semana!

Disclaimer

The insights, opinions, and analyses shared in The Cybersecurity Pulse are my own and do not represent the views or positions of my employer or any affiliated organizations. This newsletter is for informational purposes only and should not be construed as financial, legal, security, or investment advice.