The end of bug bounties? Is DEF CON canceled forever? Mythos, OAI TAC, and More

Catching up on Mythos, Glasswing, GPT-5.4-Cyber, and why MCP's biggest vulnerability is working exactly as intended

Welcome to The Cybersecurity Pulse (TCP)! I’m Darwin Salazar, Head of Growth at Monad and former detection engineer in big tech. Each week, I bring you the latest security innovation and industry news. Subscribe to receive weekly updates! 📧

Level Up: Security Under Pressure Challenge

Join Abnormal’s 5-game cybersecurity challenge inspired by real behavioral attack signals. Step into fast-paced, human-layer scenarios where instinct and speed decide the outcome.

Win prizes after every game—and complete all 5 games to automatically earn an entry into the Grand Prize Draw for a chance to win two tickets to the FIFA World Cup 2026*! (*Subject to Terms & Conditions)

Howdy 👋 - Hope you’re having a great week wherever you’re tuning in from!

I’m just getting back from some much needed time off in Costa Rica. Apparently something called Mythos happened and it nuked the security industry so we can all retire and start a new life now? Does this also mean DEF CON is canceled?

Just kidding, but it does raise the question: what comes next? I cover that in the first story of the week. Before we get into that, though, a few announcements:

Abnormal AI is hosting a security challenge that, if completed, enters you for a chance to win 2 tickets to the FIFA World Cup! 🤯

The Claude Code Detection Opportunities blog my Monad teammates cooked up 🔥

Monad recently crossed 300+ integrations. Depth + width. Massive milestone.

April 27th at the Nasdaq in NYC w/ Intezer for the AI SOC Live Show. Lining up to be a special event. Request an invite here!

Cool. Now, onto this week’s news!

TL;DR ✏️

🧬 Anthropic’s Claude Mythos + Project Glasswing — thousands of ‘zero-days’ across every major OS and browser; 50 orgs get restricted access, $100M in credits, and a whole lot of industry debate

🧙🏼♂️ OpenAI Fires Back With GPT-5.4-Cyber — fine-tuned defensive model with binary RE capabilities and lowered refusal guardrails, gated through expanded Trusted Access for Cyber program

🐙 OX Security Exposes Systemic MCP Protocol Flaw — architectural RCE across all 10 language SDKs, 200K+ exposed servers, 10+ CVEs; Anthropic calls it “by design”

📄 CSA/SANS Ship Emergency Mythos Strategy Briefing — 30-page playbook from 60+ contributors including Easterly, Gadi Evron, Rob Joyce and more

🛑 HackerOne Pauses Internet Bug Bounty Program — AI-driven discovery overwhelms open source maintainers; remediation is now the bottleneck and bounties don’t fund it

Plus: Mallory exits stealth with AI-native threat intel, Cisco nearing $250M+ Astrix acquisition, Wiz launches shadow data detection, GitHub publishes 2026 Actions security roadmap, Block details agentic code review architecture, Cloudflare expands Agent Cloud, and a whole lot more👇

⚒️ Picks of the Week ⚒️

Catching up on Anthropic’s Claude Mythos, OpenAI’s GPT-5.4-Cyber, and is DEF CON canceled?

If you were away last week like me, you may have missed the internet and cyber stocks melting down over some Anthropic news. While I hate beating a dead horse, I think this is a baby horse that’s just getting started, so I’ll bring you up to speed and keep covering it as it evolves.

Anthropic launched private access to Claude Mythos Preview, an unreleased frontier model it says can find and exploit software vulnerabilities better than all but the most elite human researchers. Rather than release it publicly, Anthropic launched Project Glasswing, giving restricted access to roughly 50 organizations, backed by $100M in usage credits. As you read through what comes next, take it with a grain of salt. Anthropic is a for-profit company.

Mythos TLDR:

Launch partners include AWS, Apple, Microsoft, Google, CrowdStrike, Palo Alto Networks, JPMorganChase, NVIDIA, and the Linux Foundation

Autonomously discovered thousands of zero-days across every major OS and browser

Found a 27-year-old OpenBSD vuln and a 17-year-old FreeBSD RCE

Anthropic says it has no plans to release Mythos Preview publicly

OpenAI’s response: GPT-5.4-Cyber

Yesterday, a week after the Mythos announcement, OpenAI dropped GPT-5.4-Cyber, a fine-tuned variant built for defensive security work.

Fine-tuned with lowered refusal guardrails for legit security tasks

Adds binary reverse engineering capabilities not in standard GPT-5.4

Access gated through their expanded Trusted Access for Cyber (TAC) program with tiered identity verification

Codex Security has contributed to fixes for over 3,000 critical and high-severity vulns since its broader launch

Industry response:

CSA, SANS, OWASP, and [un]prompted assembled a 30-page emergency strategy briefing over a single weekend with 60+ contributors including Gadi Evron, Jen Easterly, Bruce Schneier, Chris Inglis, and Rob Joyce

Paper introduces the concept of a “Mythos-ready” security program and frames VulnOps as a permanent organizational function

Core recs: reinforce segmentation and egress filtering, adopt phishing-resistant MFA, right-size IAM, and run tabletop exercises for multiple simultaneous critical-severity incidents

According to the Zero Day Clock, mean time from disclosure to exploitation has fallen to under 1 day in 2026, down from 2.3 years in 2019

What I think

There’s a fair bit of pessimistic skepticism across the industry on these matters. How much of this is a PR play in the attention economy we’re living in vs. true cause for going haywire on making your security program “Mythos-ready”?

The answer, to me, is somewhere in the middle, leaning towards the latter. And yes, security programs should have been focusing on supply chain security and RemediationOps for decades. AI has just lit a fire under these initiatives; the fundamentals remain the same: reduce attack surface, right-size IAM permissions, harden systems, enforce strong ACLs, layer defenses (especially around crown jewels), detect, respond, etc. AI has made achieving all of this 5x harder, but it’s still mostly the same work we were doing 10 years ago. In that sense, I get why some folks are calling this a nothingburger.

However, security programs thrive on being paranoid, aka being prepared. It’s healthy to see the industry rally around initiatives like the CSA report. Attackers, especially nation-states targeting critical infrastructure and the Fortune 500, have access to capabilities some of us can’t even imagine. The window between a vuln’s disclosure (or a software package’s compromise) and its exploitation has shrunk tremendously, and AI is the main culprit.

There’s a lot of shit on fire in our industry right now. TeamPCP, Scattered Spider, Lapsus$, and many nation-states are wreaking havoc across the board. If there’s ever been a time to come together as an industry, it’s now.


Don't just fix security breaches. Prevent them.

Security breaches are rising, and a detection-first model adds burden as teams manually remediate errors that should have been prevented. CNAPP platforms give you visibility into what’s misconfigured, but they need a partner to prevent problems upfront.

Turbot pairs with your CNAPP to introduce a prevention-first model that blocks errors in build and at the cloud API, and auto-remediates at runtime. Use PSPM (Preventive Security Posture Management) to cut your alerts in half.

OX Security Documents Systemic RCE Risk in Anthropic’s MCP Protocol Design

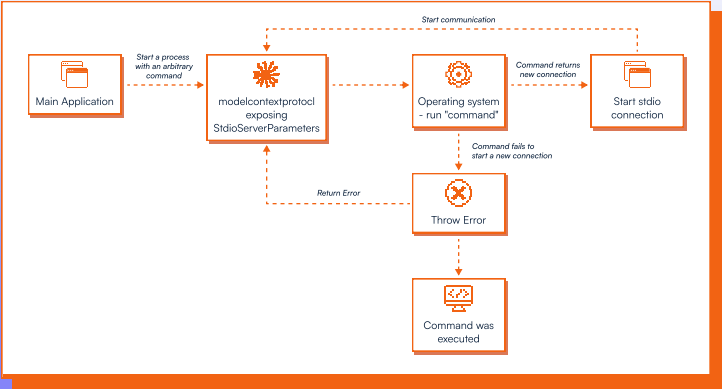

OX Security published a 30-page research report detailing a class of command execution vulnerabilities rooted in how Anthropic’s Model Context Protocol handles STDIO transport.

The core finding: MCP’s process execution logic accepts user-supplied commands and passes them directly to the OS with no input sanitization. Even when a malicious command fails to initialize a valid MCP server, the command still executes. The flaw is present across all 10 supported language SDKs (Python, TypeScript, Java, Kotlin, C#, Go, Ruby, Swift, PHP, Rust) and produced 30+ responsible disclosures and over 10 CVEs rated Critical or High.
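To make the pattern concrete, here is a minimal Python sketch. It is not MCP SDK code: `StdioServerConfig`, `naive_launch`, `guarded_launch`, and the `APPROVED_SERVERS` entries are hypothetical. It assumes a client that, like a STDIO transport, spawns a local process from a command-plus-arguments config and only afterwards attempts the protocol handshake, which is why a non-MCP command has already run by the time anything fails. The guarded version shows one possible secure default: an exact-match allowlist checked before anything is spawned.

```python
import subprocess
from dataclasses import dataclass


@dataclass(frozen=True)
class StdioServerConfig:
    """Command plus arguments, loosely modeled on how clients describe STDIO servers."""
    command: str
    args: tuple[str, ...] = ()

    def argv(self) -> tuple[str, ...]:
        return (self.command, *self.args)


def naive_launch(cfg: StdioServerConfig) -> subprocess.Popen:
    # Risky pattern: the OS runs whatever the config names *before* any
    # handshake, so a non-MCP payload has executed even if the handshake fails.
    return subprocess.Popen(list(cfg.argv()), stdin=subprocess.PIPE, stdout=subprocess.PIPE)


# Hypothetical allowlist: exact argv tuples an admin has reviewed.
APPROVED_SERVERS: frozenset[tuple[str, ...]] = frozenset({
    ("uvx", "example-mcp-server"),
    ("npx", "-y", "example-mcp-server"),
})


def is_approved(cfg: StdioServerConfig, approved: frozenset = APPROVED_SERVERS) -> bool:
    # Exact match on the full argv: no prefixes, no partial matches.
    return cfg.argv() in approved


def guarded_launch(cfg: StdioServerConfig, approved: frozenset = APPROVED_SERVERS) -> subprocess.Popen:
    # Refuse before anything is spawned, rather than after a failed handshake.
    if not is_approved(cfg, approved):
        raise PermissionError(f"STDIO server not on allowlist: {cfg.argv()!r}")
    return naive_launch(cfg)
```

Matching the full argv is deliberately strict: allowlisting only the executable (say, `npx`) would still let a config fetch and run any package it names.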

The most severe findings involve publicly deployed instances. LangFlow (owned by IBM) had 915 publicly accessible servers on Shodan exposing unauthenticated RCE via STDIO MCP config. OX achieved authenticated RCE on Letta AI production servers via transport-type substitution. These are real, exploitable attack paths. OX also uploaded a proof-of-concept malicious MCP server to 9 of 11 MCP marketplaces without challenge: the same class of supply chain risk as malicious npm/PyPI packages, now applied to MCP.

Anthropic did not fix the vulns and stated: “This is an explicit part of how stdio MCP servers work and we believe that this design does represent a secure default.” LangChain and FastMCP echoed the same stance. OX’s counter: protocol maintainers should take responsibility for secure defaults rather than pushing input sanitization onto every downstream implementer.

While these findings aren’t a fire drill, they highlight what we’ve been hammering on for a while: MCP and agents are overprivileged by default, and even frontier model labs are susceptible to design and code flaws. Great findings and a stellar report by the OX team.

What Happens in the Browser Doesn't Stay in the Browser

The reality is that most people are pasting sensitive data into ChatGPT/Claude, clicking phishing links mid-workflow, and running browser extensions nobody's ever vetted.

All of that happens in the browser, and most security tooling isn't even looking there. Keep Aware is a great tool I recently came across that sits at the browser layer, giving you visibility into GenAI usage, native DLP controls, and real-time phishing prevention at the point of click. If you're not watching the browser yet, you're missing the biggest attack surface employees use every day.

AI-Led Remediation Crisis Prompts HackerOne to Pause Bug Bounties

HackerOne suspended new submissions to its Internet Bug Bounty program as of March 27 because AI-assisted discovery is flooding open source maintainers with more vulns than they can actually remediate. The whole bug bounty model was built for a world where finding bugs was the hard part, but AI flipped that completely. Now remediation is the bottleneck and bounties don’t fund it. This is a big signal that the economics of crowdsourced vuln research are breaking under AI pressure and the industry needs to start funding the fix, not just the find. Crowdsourced RemediationOps anyone? Lol… Security demand has only grown due to AI imo.

How Block Builds In-House Code Review with Agents

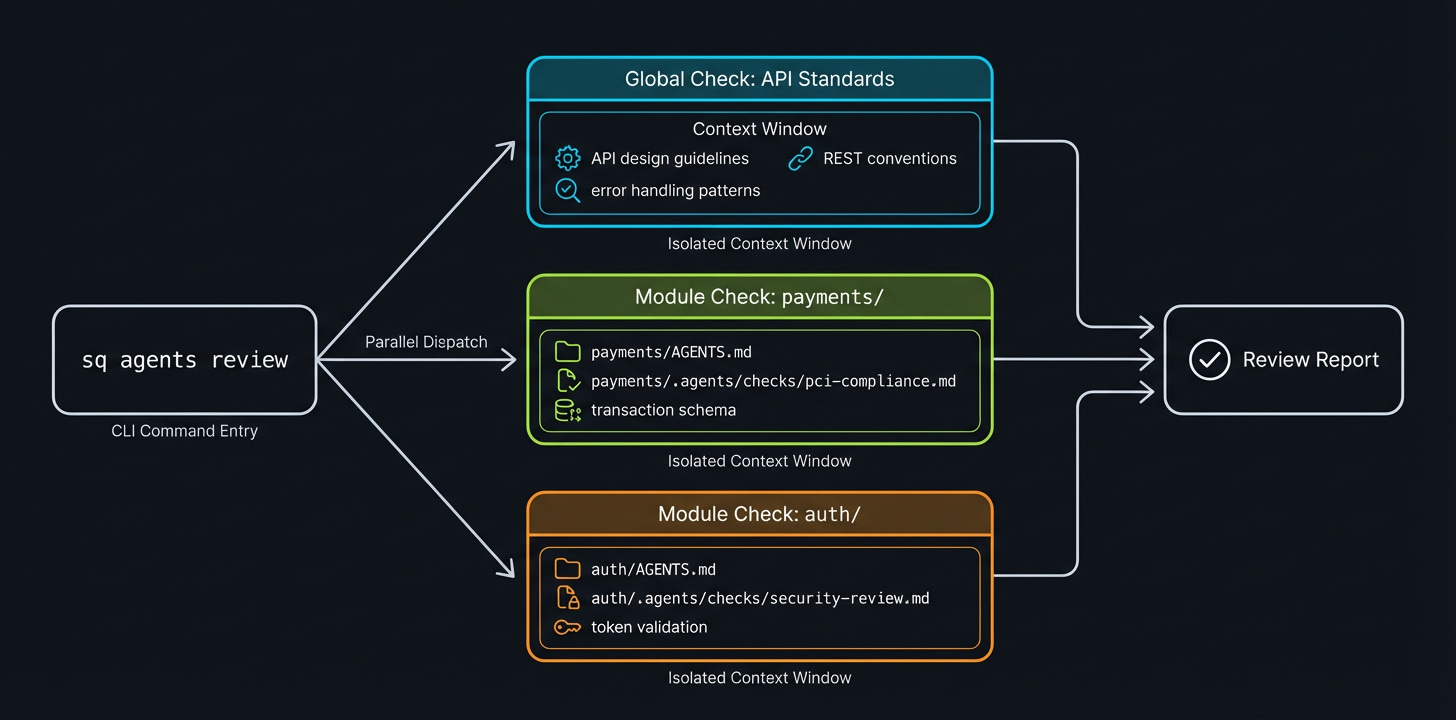

Block’s engineering team built AI “protector” agents (their tool Builderbot) that act as always-on code review guardians. They use standardized CLI contracts via Just, nested AGENTS.md files, and Amp’s Code Review Checks pattern to feed agents hyperlocal module-level context while keeping everything aligned to a global architectural “world model.” Yet another data point on build v. buy in security!
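The nested AGENTS.md idea can be illustrated with a small Python sketch. This is not Builderbot’s code; `agents_md_chain` and `build_review_context` are hypothetical helpers, and the assumption that every AGENTS.md from the repo root down to the changed file’s directory gets layered (global first, most local last) is ours. It shows one way an agent could get hyperlocal module guidance without losing the repo-wide “world model.”

```python
from pathlib import PurePosixPath


def agents_md_chain(changed_file: str, agents_files: set[str]) -> list[str]:
    """List AGENTS.md files from the repo root down to the changed file's directory."""
    directory = PurePosixPath(changed_file).parent
    # .parents is ordered closest-first; reverse it so the root comes first.
    dirs = list(directory.parents)[::-1] + [directory]
    chain = []
    for d in dirs:
        candidate = str(d / "AGENTS.md")
        if candidate in agents_files:
            chain.append(candidate)
    return chain


def build_review_context(changed_file: str, docs: dict[str, str]) -> str:
    """Concatenate matching AGENTS.md contents, global guidance first, local last."""
    sections = [f"## {path}\n{docs[path]}" for path in agents_md_chain(changed_file, set(docs))]
    return "\n\n".join(sections)
```

Ordering global before local lets the most specific module guidance come last, where a reviewer agent would typically treat it as the refinement.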

Mallory Exits Stealth With AI-Native Threat Intel 🔥

Mallory, founded by Jonathan Cran (founder of Intrigue, which was acquired by Mandiant), is now GA and out of stealth. Mallory is an AI-native threat intelligence platform that monitors thousands of threat sources and contextualizes them against an organization’s actual attack surface.

It’s the next gear for threat intel: it produces prioritized, evidence-based cases mapped to what’s exploitable within your environment, covering hunting, detection, and exposure management. The platform ships with native support for Claude Code, MCP, and API integrations. Mallory announced a seed round led by Decibel Partners with participation from Live Oak Venture Partners and angels from Google, Robinhood, Cisco, Fastly, and GreyNoise. No funding amount was disclosed.

Kudos to JCran! One of the nicest and smartest guys in the security industry. Check out this video/live demo with him and MattJay from VulnU!

🎙️Inside the Network with Christina Cacioppo, Vanta CEO

The Inside the Network crew (Sid Trivedi, Ross Haleliuk, Mahendra Ramsinghani) sat down with Vanta co-founder and CEO Christina Cacioppo for a conversation on her journey and building Vanta into a $4B+ valuation company.

To me, Christina is one of the top 5 founders in security (and adjacent spaces). She’s down to earth, brilliant, and relentless, so this pod was a treat.

Worth a listen for anyone building a security company. Also, kudos to the ITN crew: one of the best and most consistent pods in security right now.

🔮 The Future of Security 🔮

AI Security

Cloudflare Expands Agent Cloud With Sandboxed Runtimes and Persistent Compute

Cloudflare expanded its Agent Cloud platform with infrastructure designed to move AI agents from local demos to production workloads.

Key releases include Dynamic Workers, an isolate-based runtime that executes AI-generated code in sandboxed environments at 100x the speed and a fraction of the cost of containers; Artifacts, a Git-compatible storage layer supporting tens of millions of repositories for agent-managed code; and Sandboxes, which give agents access to persistent Linux environments with shell, filesystem, and background processes.


Application Security

GitHub Publishes 2026 Actions Security Roadmap After Supply Chain Attacks Multiply

GitHub laid out its 2026 Actions security roadmap in response to a wave of supply chain attacks targeting CI/CD automation, including incidents hitting tj-actions/changed-files, Nx, and trivy-action.

The roadmap introduces workflow-level dependency locking (think go.mod for Actions), policy-driven execution controls via rulesets that restrict who can trigger workflows and which events are allowed, scoped secrets that bind credentials to specific execution contexts, and a native Layer 7 egress firewall for hosted runners. Most capabilities are targeting public preview within 3-6 months.

Long overdue: write access to a repo will no longer grant secret management permissions.
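Until workflow-level locking ships, the closest existing practice is pinning every third-party action to a full commit SHA, since tags like `@v4` are mutable (retargeted tags are how the tj-actions/changed-files compromise reached downstream workflows). Below is a minimal Python sketch of a linter that flags unpinned `uses:` references. It is regex-based, assumes conventional one-line `uses:` entries, and is not GitHub’s upcoming lock mechanism; `unpinned_actions` is a hypothetical helper.

```python
import re

# One `uses: owner/repo[/path]@ref` reference per line, optionally list-prefixed or quoted.
# Local actions (`./path`) have no @ref, so this pattern never matches them.
USES_RE = re.compile(r"^\s*-?\s*uses:\s*['\"]?([^@\s'\"]+)@([^\s'\"#]+)", re.MULTILINE)
# A full-length commit SHA is the ref form that cannot be moved to point at different code.
FULL_SHA_RE = re.compile(r"[0-9a-f]{40}")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `action@ref` strings whose ref is a tag or branch instead of a full SHA."""
    findings = []
    for action, ref in USES_RE.findall(workflow_text):
        # Docker image references are pinned by digest instead; skip them here.
        if action.startswith("docker://"):
            continue
        if not FULL_SHA_RE.fullmatch(ref):
            findings.append(f"{action}@{ref}")
    return findings
```

Run against a workflow file’s text, it returns only the references that still float on a tag or branch, which makes it easy to wire into CI as a failing check.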

Cloud Security

Intruder Brings Agentless Container Image Scanning to Midmarket Teams

Intruder added container image scanning to its cloud security platform, integrating directly with AWS ECR, Google Cloud Artifact Registry, and Azure Container Registry to scan images daily without deploying agents.

Data Security

Wiz Targets Stale and Redundant Cloud Data With New DSPM Module

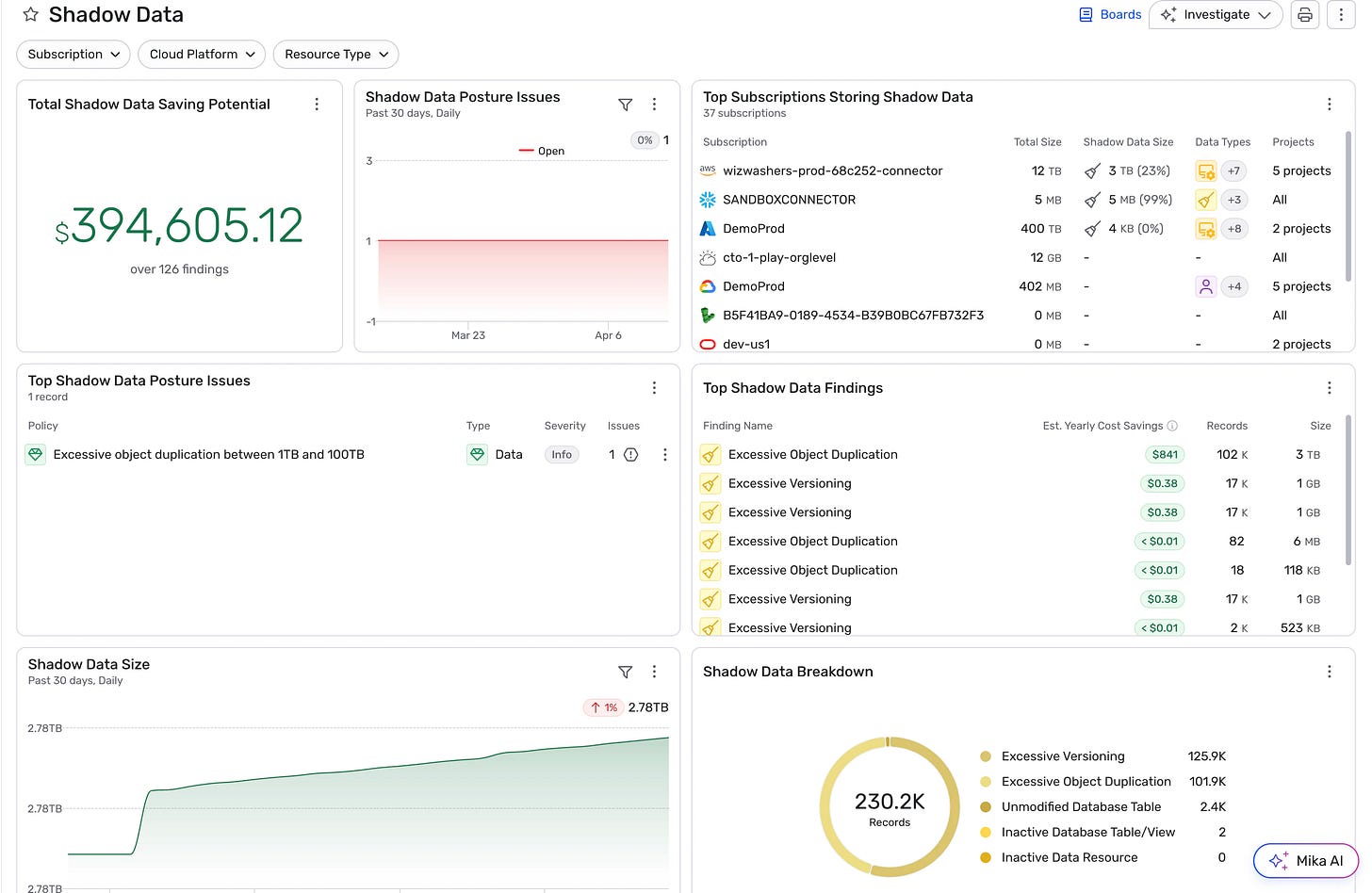

Wiz released Shadow Data Detection as part of its DSPM platform, designed to identify stale, duplicated, and over-retained data across cloud storage. The feature analyzes cloud provider inventory reports to flag redundant objects, excessive versioning, and orphaned data, then surfaces estimated cost savings and exposure reduction in a centralized dashboard. Across early customer environments, Wiz identified over 1 exabyte of data in storage buckets, with a significant portion classified as redundant.
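To illustrate the kind of analysis involved, here is a rough Python sketch over inventory-report-style rows. It is not Wiz’s implementation; `InventoryRow`, the 365-day staleness cutoff, and treating matching (ETag, size) pairs as duplicates are all assumptions. ETags are only a reliable content fingerprint for some upload types (multipart uploads, for example, produce ETags that are not a plain MD5 of the object), so a real tool would need stronger evidence before deleting anything.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class InventoryRow:
    """One object from a storage inventory report (hypothetical, simplified schema)."""
    key: str
    size: int
    etag: str
    last_modified: datetime


def duplicate_groups(rows: list[InventoryRow]) -> list[list[str]]:
    """Group keys sharing an (ETag, size) pair, a cheap proxy for identical content."""
    groups: dict[tuple[str, int], list[str]] = defaultdict(list)
    for row in rows:
        groups[(row.etag, row.size)].append(row.key)
    return [sorted(keys) for keys in groups.values() if len(keys) > 1]


def redundant_bytes(rows: list[InventoryRow]) -> int:
    """Bytes reclaimable by keeping a single copy from each duplicate group."""
    counts: dict[tuple[str, int], int] = defaultdict(int)
    for row in rows:
        counts[(row.etag, row.size)] += 1
    return sum(size * (n - 1) for (_, size), n in counts.items())


def stale_keys(rows: list[InventoryRow], now: datetime,
               max_age: timedelta = timedelta(days=365)) -> list[str]:
    """Keys not modified within max_age (an arbitrary illustrative threshold)."""
    return [row.key for row in rows if now - row.last_modified > max_age]
```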

Identity and Access Management

Cisco Reportedly Nearing $250M+ Deal for AI Agent Security Startup Astrix

Cisco is reportedly in talks to acquire Astrix Security for between $250M and $350M, roughly 3x the startup’s total funding to date. Astrix discovers AI agents, MCP servers, and non-human identities across enterprise environments, then scans for misconfigurations like overprivileged service accounts or publicly exposed agent endpoints. The platform includes JIT access controls that auto-expire agent credentials. This would be Cisco’s second AI security acquisition in a week, following the Galileo Technologies deal for its hallucination firewall, and signals Cisco is all in on AI security and observability.

Interested in sponsoring TCP?

Sponsoring TCP not only helps me continue to bring you the latest in security innovation, but it also connects you to a dedicated audience of 20,000+ CISOs, practitioners, founders, and investors across 135+ countries 🌎

Bye for now 👋🏽

That’s all for this week… ¡Nos vemos la próxima semana!

Disclaimer

The insights, opinions, and analyses shared in The Cybersecurity Pulse are my own and do not represent the views or positions of my employer or any affiliated organizations. This newsletter is for informational purposes only and should not be construed as financial, legal, security, or investment advice.
